Token Incentives for High-Quality AI Data Labeling in Blockchain Projects 2026

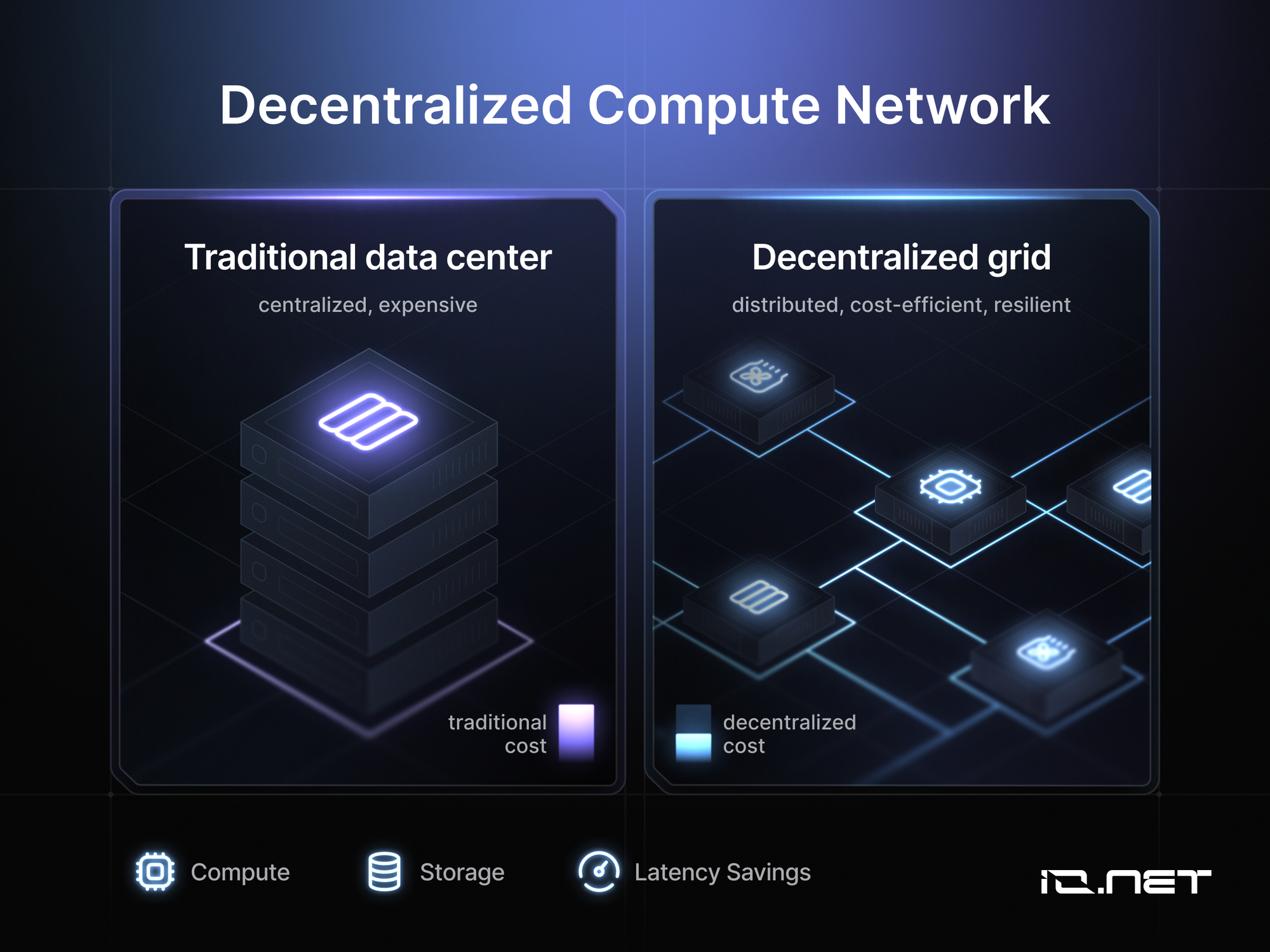

In the accelerating fusion of artificial intelligence and blockchain by early 2026, token-incentivized data labeling has solidified as a cornerstone for producing superior AI training datasets. Traditional methods, reliant on centralized platforms and low-paid gig workers, often yield inconsistent annotations riddled with errors. Blockchain projects counter this by deploying native tokens as precise incentives, aligning contributor motivations directly with data accuracy and fostering decentralized AI datasets that scale globally.

This shift matters profoundly. High-stakes AI applications, from autonomous systems to predictive analytics in crypto trading, demand datasets unmarred by bias or imprecision. Tokens, governed by smart contracts, introduce verifiable quality checks; contributors stake assets on their work, facing penalties for subpar output. The result? A self-regulating ecosystem where crypto rewards for AI annotation drive excellence, much like performance-based compensation in elite portfolios rewards sustained value creation.

Overcoming Centralized Data Labeling Pitfalls

Centralized labeling services dominate legacy AI development, but they falter under scrutiny. Scalability stalls as demand surges; quality dips amid rote tasks performed by under-motivated annotators. Costs balloon for specialized domains like medical imaging or multilingual sentiment analysis. In 2026, these frailties expose AI models to cascading failures, eroding trust in deployed systems.

Blockchain disrupts this inertia. By tokenizing contributions, platforms create open marketplaces for Web3 data annotation incentives. Participants worldwide compete for bounties, their reputations encoded on-chain. Peer validation and automated oracles slash fraud, while tokenomics ensure liquidity for ongoing participation. Consider the economics: a contributor earning $LABEL tokens for precise bounding boxes in computer vision tasks can trade them instantly, mirroring efficient capital markets.

Key Advantages of Token Data Labeling

- Improved Accuracy via Staking: Labelers stake tokens that smart contracts slash for errors, boosting precision, as in Sahara AI’s quality controls and ChainLabel’s $LABEL incentives.
- Global Workforce Scalability: Decentralized platforms tap contributors worldwide, as with Ta-da’s mobile app for broad data validation and Sahara AI’s bounty model.
- Transparent Reward Distribution: Smart contracts automate payouts, ensuring fairness, as seen in Bittensor’s TAO rewards and the iDML framework’s incentive mechanisms.
- Reduced Bias through Competition: Competitive token rewards favor high-quality inputs and help curb bias, through peer reviews in Ta-da and performance stakes in Bittensor.
- Cost Efficiency over Centralized Services: Lower overhead from peer-to-peer incentives lets these platforms outperform traditional providers on cost, as in ChainLabel and the DIN framework.

Mechanics of Token-Driven Quality Assurance

At its core, a blockchain data labeling platform operates through layered incentives. Smart contracts define tasks: annotate images, tag sentiments, validate federated learning outputs. Contributors submit work, often staking tokens as collateral. Validators, another cohort of users, review submissions, earning fractions of rewards or slashing stakes for inaccuracies. This game-theoretic design, refined in projects like Bittensor, compels precision; sloppy work erodes one’s on-chain score, curtailing future opportunities.
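The stake, review, and slash loop described above can be sketched in a few lines of Python. This is a minimal off-chain model, not any platform's contract: the basis-point parameters, the strict-majority voting rule, the ±1 score update, and routing slashed stake to validators are all assumptions chosen for illustration.

```python
from dataclasses import dataclass, field

# Hypothetical parameters in basis points; integer math mirrors how
# on-chain contracts typically avoid floating point.
BPS = 10_000
VALIDATOR_CUT_BPS = 1_000   # 10% of an accepted task's reward goes to validators
SLASH_BPS = 5_000           # 50% of the stake is slashed when work is rejected


@dataclass
class Labeler:
    address: str
    balance: int            # token balance in smallest units
    score: int = 0          # simplified on-chain reputation score


@dataclass
class Submission:
    labeler: Labeler
    stake: int
    votes: list[bool] = field(default_factory=list)  # True = validator judged it accurate


def submit(labeler: Labeler, stake: int) -> Submission:
    """Lock `stake` tokens as collateral for one annotation."""
    if stake <= 0 or stake > labeler.balance:
        raise ValueError("invalid stake")
    labeler.balance -= stake
    return Submission(labeler, stake)


def settle(sub: Submission, reward: int) -> tuple[str, int]:
    """Resolve a submission by strict validator majority.

    Returns the outcome and the amount paid out to validators.
    """
    if not sub.votes:
        raise ValueError("no validator votes")
    if sum(sub.votes) * 2 > len(sub.votes):
        cut = reward * VALIDATOR_CUT_BPS // BPS
        sub.labeler.balance += sub.stake + reward - cut  # stake back plus net reward
        sub.labeler.score += 1
        return "accepted", cut
    slashed = sub.stake * SLASH_BPS // BPS
    sub.labeler.balance += sub.stake - slashed  # only part of the stake comes back
    sub.labeler.score -= 1
    return "rejected", slashed  # slashed tokens compensate the reviewers


alice = Labeler("0xA11CE", balance=1_000)
sub = submit(alice, stake=100)
sub.votes = [True, True, False]
print(settle(sub, reward=200))     # ('accepted', 20)
print(alice.balance, alice.score)  # 1180 1
```

Ties count as rejections in this sketch, which errs toward caution; a production design might instead escalate tied submissions to additional reviewers.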

The gains are measurable. Reported error rates fall 40-60% relative to traditional benchmarks, according to academic frameworks such as iDML. Tokens accrue value through utility: AI developers spend them to query datasets, and those fees are either burned, shrinking supply, or used to fund liquidity pools. This circular economy sustains engagement, turning data labeling into a viable profession rather than gig drudgery.
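To make the circular economy concrete, here is a toy model of how a single dataset-query fee might be split between burning and liquidity. The 30% burn share and the `TokenEconomy` class are hypothetical; real platforms set their own ratios and may route fees differently.

```python
from dataclasses import dataclass

BPS = 10_000
BURN_BPS = 3_000  # hypothetical: 30% of each query fee is burned


@dataclass
class TokenEconomy:
    total_supply: int
    liquidity_pool: int = 0
    total_burned: int = 0

    def pay_query_fee(self, fee: int) -> None:
        """Split one dataset-query fee: part burned, the rest to liquidity."""
        if fee <= 0:
            raise ValueError("fee must be positive")
        burned = fee * BURN_BPS // BPS       # floor division; rounding remainder goes to the pool
        self.total_supply -= burned          # burning permanently shrinks supply
        self.total_burned += burned
        self.liquidity_pool += fee - burned  # remainder deepens the pool


econ = TokenEconomy(total_supply=1_000_000)
for fee in (500, 1_200, 300):
    econ.pay_query_fee(fee)
print(econ)
# TokenEconomy(total_supply=999400, liquidity_pool=1400, total_burned=600)
```

Because the pool receives `fee - burned`, every fee is fully accounted for: burned plus pooled always equals what developers paid.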

Pioneering Platforms Shaping 2026 Standards

Bittensor leads with its TAO token ecosystem, where subnet operators contribute specialized AI models, including labeling modules. Performance metrics dictate rewards, cultivating a meritocracy of machine intelligence. As of March 2026, this network processes vast decentralized datasets, suggesting that token incentives can scale sophisticated tasks.
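Performance-based rewards can be illustrated with a simple proportional split of a fixed per-epoch emission. This is not Bittensor's actual consensus mechanism, which is considerably more involved; `split_emission` and the integer performance scores are assumptions for illustration only.

```python
def split_emission(emission: int, scores: dict[str, int]) -> tuple[dict[str, int], int]:
    """Hypothetical reward rule: split one epoch's emission by performance score.

    Contributors with non-positive scores receive nothing. Payouts are floored
    to whole token units; the undistributed remainder ("dust") is returned so
    it could roll over into the next epoch.
    """
    eligible = {who: s for who, s in scores.items() if s > 0}
    total = sum(eligible.values())
    if total == 0:
        return {}, emission  # nobody performed; carry the whole emission forward
    payouts = {who: emission * s // total for who, s in eligible.items()}
    return payouts, emission - sum(payouts.values())


payouts, dust = split_emission(1_000, {"a": 5, "b": 3, "c": 1, "d": 0})
print(payouts, dust)  # {'a': 555, 'b': 333, 'c': 111} 1
```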

Sahara AI’s bounty model amplifies this. Contributors tackle granular labeling jobs, compensated in crypto with automated quality gates and peer scrutiny. Their platform’s focus on essential AI training tasks has drawn substantial participation, underscoring bounties’ pull in democratizing data work.
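A bounty pipeline with automated quality gates followed by peer scrutiny might look like the sketch below, using bounding-box annotation as the example task. The `Box` and `Bounty` types, the specific checks, the three-review quorum, and the two-thirds approval rule are hypothetical and are not taken from Sahara AI's platform.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Box:
    label: str
    x: int
    y: int
    w: int
    h: int


@dataclass
class Bounty:
    allowed_labels: frozenset[str]
    image_w: int
    image_h: int
    min_reviews: int = 3   # hypothetical quorum of peer reviewers
    payout: int = 50       # hypothetical tokens paid on approval


def automated_gate(bounty: Bounty, boxes: list[Box]) -> list[str]:
    """Cheap deterministic checks that run before any human review."""
    errors = []
    if not boxes:
        errors.append("no annotations")
    for i, b in enumerate(boxes):
        if b.label not in bounty.allowed_labels:
            errors.append(f"box {i}: unknown label {b.label!r}")
        if b.w <= 0 or b.h <= 0:
            errors.append(f"box {i}: empty box")
        if b.x < 0 or b.y < 0 or b.x + b.w > bounty.image_w or b.y + b.h > bounty.image_h:
            errors.append(f"box {i}: outside image")
    return errors


def review(bounty: Bounty, boxes: list[Box], peer_votes: list[bool]) -> tuple[str, int]:
    """Automated gate first, then a peer quorum with a two-thirds approval rule."""
    if automated_gate(bounty, boxes):
        return "rejected_by_gate", 0
    if len(peer_votes) < bounty.min_reviews:
        return "pending_review", 0
    if 3 * sum(peer_votes) >= 2 * len(peer_votes):
        return "paid", bounty.payout
    return "rejected_by_peers", 0


bounty = Bounty(frozenset({"car", "pedestrian"}), image_w=640, image_h=480)
good = [Box("car", 10, 20, 100, 60)]
bad = [Box("tree", 600, 20, 100, 60)]
print(review(bounty, good, [True, True, False]))  # ('paid', 50)
print(review(bounty, bad, [True, True, True]))    # ('rejected_by_gate', 0)
```

Running the cheap automated checks first means peer reviewers only spend time (and earn tokens) on submissions that are at least structurally valid.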

ChainLabel refines the loop with $LABEL tokens funding access to premium annotations. Developers post bounties; labelers compete, their accuracy tracked immutably. Ta-da gamifies it further via mobile tasks, blending peer reviews with token drops to engage casual users in high-quality validation.

Academic pursuits bolster these real-world deployments. The Decentralized Intelligence Network (DIN) outlines a framework merging personal data stores with federated learning and blockchain rewards, preserving sovereignty while enabling collaborative AI training. Likewise, iDML deploys smart contracts for equitable incentives in decentralized machine learning, ensuring participants reap fair shares of value from their annotations. These blueprints reveal token incentives not as gimmicks, but as rigorous economic engines propelling decentralized AI datasets toward reliability.
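For the federated side of DIN-style designs, a standard aggregation step is federated averaging (FedAvg), in which participants share model parameters rather than raw data. The sketch below pairs FedAvg with a reward split proportional to each participant's example count. That payout rule is an assumption for illustration; neither DIN nor iDML is claimed to use it.

```python
Update = tuple[list[float], int]  # (model parameters, number of local examples)


def fed_avg(updates: dict[str, Update]) -> list[float]:
    """Federated averaging: weight each participant's parameters by example count.

    Only parameters leave each participant; raw data stays local.
    """
    total = sum(n for _, n in updates.values())
    if total == 0:
        raise ValueError("no training examples reported")
    dim = len(next(iter(updates.values()))[0])
    avg = [0.0] * dim
    for params, n in updates.values():
        if len(params) != dim:
            raise ValueError("parameter vectors differ in length")
        for i, p in enumerate(params):
            avg[i] += p * n / total
    return avg


def reward_shares(updates: dict[str, Update], pool: int) -> dict[str, int]:
    """Hypothetical payout rule: split the pool by each participant's example count."""
    total = sum(n for _, n in updates.values())
    if total == 0:
        raise ValueError("no training examples reported")
    return {pid: pool * n // total for pid, (_, n) in updates.items()}


updates = {"alice": ([1.0, 2.0], 300), "bob": ([3.0, 4.0], 100)}
print(fed_avg(updates))               # [1.5, 2.5]
print(reward_shares(updates, 1_000))  # {'alice': 750, 'bob': 250}
```

Weighting by example count rewards volume; a quality-aware design would weight payouts by each update's measured contribution to validation accuracy instead.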

Quantifying the Gains: Metrics That Matter

Hard numbers affirm the superiority of token-incentivized data labeling. Platforms like ChainLabel boast annotation accuracy exceeding 95%, trouncing centralized baselines by wide margins. Bittensor’s subnets process millions of inferences daily, with token rewards correlating directly to model efficacy. Sahara AI’s bounties, totaling hundreds of thousands in crypto payouts, have mobilized thousands of labelers, yielding datasets primed for edge AI in blockchain oracles. Ta-da’s mobile-first approach logs peer-validated contributions at scale, transforming idle smartphones into nodes of precision.
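Accuracy figures like these are commonly measured by seeding the task stream with hidden gold-standard items whose answers are already known. The sketch below shows that technique; the source does not say how the reported figures were obtained, so treat the method, the 95% threshold, and the sample data as illustrative assumptions.

```python
from collections import defaultdict
from collections.abc import Iterable


def gold_accuracy(
    labels: Iterable[tuple[str, str, str]], gold: dict[str, str]
) -> dict[str, float]:
    """Per-labeler accuracy on hidden gold-standard items.

    labels: (labeler, item_id, label) tuples; items without a gold answer are ignored.
    gold:   item_id -> known correct label.
    """
    hits: dict[str, int] = defaultdict(int)
    seen: dict[str, int] = defaultdict(int)
    for labeler, item, label in labels:
        if item in gold:
            seen[labeler] += 1
            hits[labeler] += label == gold[item]  # True counts as 1
    return {who: hits[who] / seen[who] for who in seen}


gold = {"img1": "cat", "img2": "dog", "img3": "cat", "img4": "bird"}
labels = [
    ("alice", "img1", "cat"), ("alice", "img2", "dog"),
    ("alice", "img3", "cat"), ("alice", "img4", "bird"),
    ("bob", "img1", "dog"), ("bob", "img2", "dog"),
    ("bob", "img3", "cat"), ("bob", "img4", "cat"),
    ("bob", "img9", "fish"),  # not a gold item, so it does not count
]
accuracy = gold_accuracy(labels, gold)
flagged = [who for who, acc in accuracy.items() if acc < 0.95]  # illustrative threshold
print(accuracy, flagged)  # {'alice': 1.0, 'bob': 0.5} ['bob']
```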

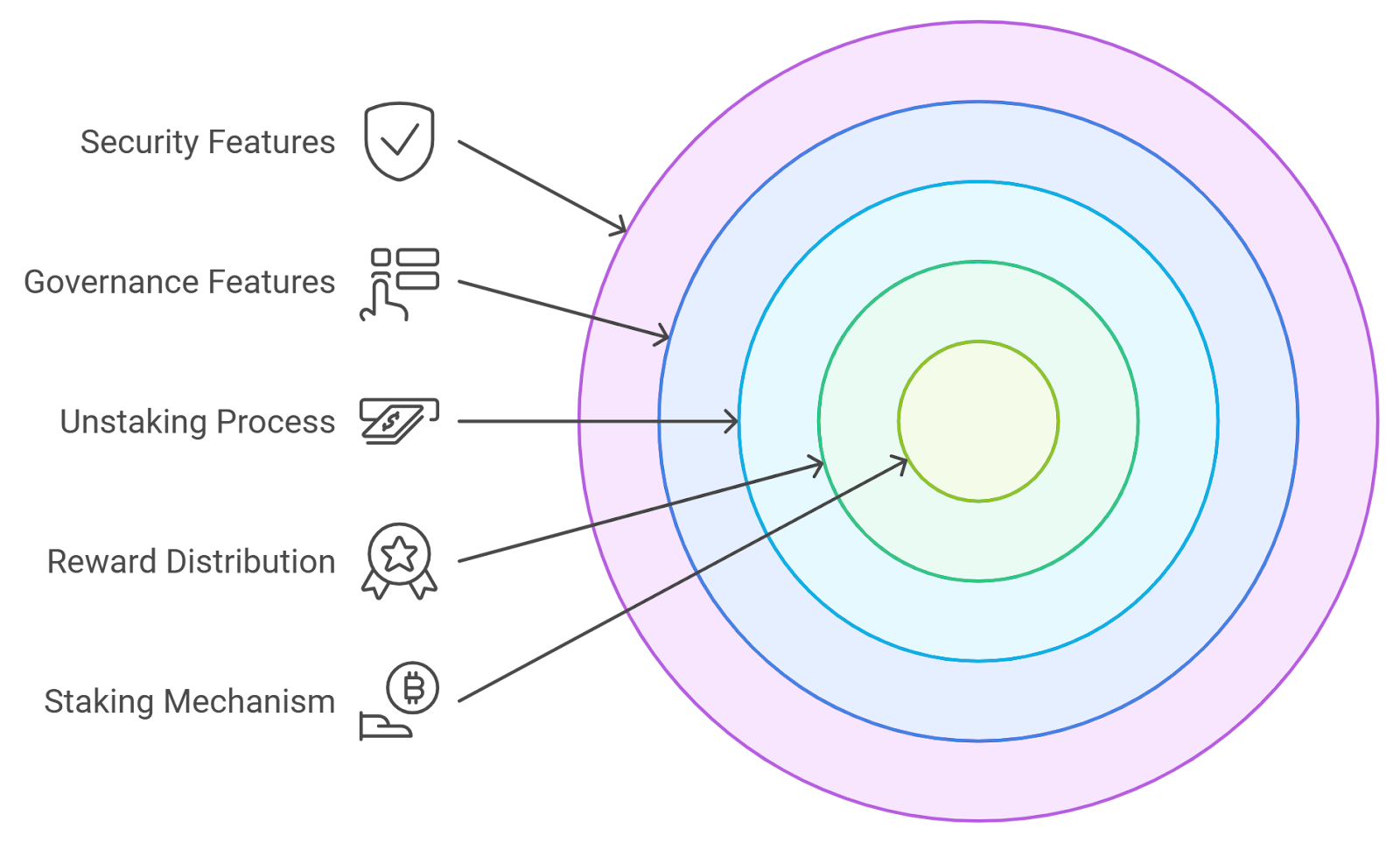

From an investor’s vantage, akin to screening dividend aristocrats for yield consistency, these systems prioritize sustainable tokenomics. Utility burns tokens on dataset access, scarcity accrues via staking locks, and governance empowers top contributors. Volatility tempers enthusiasm, yet underlying demand from AI proliferation suggests enduring value capture.

Comparison of Leading Token-Incentivized AI Data Labeling Platforms (2026)

| Platform | Native Token | Core Incentive Mechanism | Reported Quality Outcome | Key Use Case |
|---|---|---|---|---|
| Bittensor | TAO | Performance-based rewards for AI models | 40-60% error reduction | Decentralized AI model subnets |
| Sahara AI | Cryptocurrency (bounty tokens) | Bounty model with crypto payouts, peer review, and automated checks | High accuracy for AI training data | Granular labeling tasks |
| ChainLabel | $LABEL | Staking on annotations for precise labeling | Over 95% accuracy | Premium dataset access |
| Ta-da | Platform tokens | Mobile tasks with peer validation | Scalable engagement via peer review | Casual data validation |

Web3 Annotation Challenges & Solutions

- Token Volatility Mitigation: Pairing rewards with stablecoins (e.g., a USDC/project-token pair) stabilizes payouts amid market swings, in line with 2026 tokenomics guidance on liquidity strategies (Blockchain App Factory).
- Sybil Attack Defenses: Reputation scores built from verified tasks and peer reviews curb multi-account exploits; Sahara AI and Ta-da employ this approach.
- Cross-Chain Interoperability: Protocols such as LayerZero and IBC facilitate dataset sharing across chains, supporting frameworks like the Decentralized Intelligence Network (DIN).
- Regulatory Navigation: Geo-compliant KYC and decentralized identity for global contributors support compliance with local laws, enabling broad participation in projects like ChainLabel.
- Layer-2 Cost Efficiency: Integration with Optimism or Arbitrum slashes transaction fees for scalable annotation, which is vital for high-volume Web3 AI data tasks.
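The stablecoin approach to volatility can be sketched as quoting each reward in USD, paying a fixed share in USDC, and converting the remainder into project tokens at an oracle price at payout time. The 50% split, the rounding precision, and the `payout` function are assumptions, not a documented scheme from any platform named here.

```python
from decimal import Decimal, ROUND_DOWN

STABLE_SHARE = Decimal("0.5")  # hypothetical: half of each reward is paid in USDC


def payout(reward_usd: Decimal, token_price_usd: Decimal) -> tuple[Decimal, Decimal]:
    """Pay a USD-quoted reward partly in a stablecoin and partly in project tokens.

    token_price_usd would come from a price oracle at payout time.
    """
    if token_price_usd <= 0:
        raise ValueError("invalid oracle price")
    usdc = (reward_usd * STABLE_SHARE).quantize(Decimal("0.01"), rounding=ROUND_DOWN)
    tokens = ((reward_usd - usdc) / token_price_usd).quantize(
        Decimal("0.0001"), rounding=ROUND_DOWN
    )
    return usdc, tokens


# Same $10 task before and after the token price halves.
print(payout(Decimal("10.00"), Decimal("0.25")))   # (Decimal('5.00'), Decimal('20.0000'))
print(payout(Decimal("10.00"), Decimal("0.125")))  # (Decimal('5.00'), Decimal('40.0000'))
```

The USDC half stays constant regardless of the token price, so a labeler's guaranteed income does not shrink when the market does; only the token half carries price exposure after payout.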

Navigating Hurdles in the Token Economy

No revolution is free of friction. Token price swings can deter labelers, prompting platforms to pair rewards with stablecoins or liquidity incentives. Sybil attacks, in which bad actors spawn fake identities, can be countered with proof-of-personhood and on-chain reputation. Interoperability lags, but bridges and standards like those in DIN pave the way for multi-chain data flows. Regulatory scrutiny looms over crypto payouts, yet compliant designs that treat tokens as utilities aim to sidestep the main pitfalls.
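One way proof-of-personhood and reputation can combine against Sybil attacks is to count limits per verified person rather than per wallet, so spawning extra wallets gains nothing. In this sketch `person_id` stands in for whatever identifier a personhood protocol might issue; the field names, the claim cap, and the reputation thresholds are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Account:
    wallet: str
    person_id: Optional[str]  # hypothetical proof-of-personhood ID; None if unverified
    reputation: int = 0       # earned from verified tasks and peer reviews


@dataclass
class TaskBoard:
    max_claims_per_person: int = 2
    active: dict[str, int] = field(default_factory=dict)  # person_id -> open claims

    def can_claim(self, acct: Account, min_reputation: int) -> bool:
        if acct.person_id is None:
            return False  # unverified wallets cannot claim paid tasks
        if acct.reputation < min_reputation:
            return False  # fresh identities start on low-value tasks
        # Limits are counted per person, not per wallet.
        return self.active.get(acct.person_id, 0) < self.max_claims_per_person

    def claim(self, acct: Account, min_reputation: int = 0) -> bool:
        if not self.can_claim(acct, min_reputation):
            return False
        self.active[acct.person_id] = self.active.get(acct.person_id, 0) + 1
        return True


board = TaskBoard()
# One person controlling three wallets gets no more claims than one wallet would.
wallets = [Account(f"0x{i}", person_id="p1", reputation=10) for i in range(3)]
print([board.claim(w, min_reputation=5) for w in wallets])  # [True, True, False]
print(board.claim(Account("0xbot", person_id=None, reputation=99)))  # False
```

A real board would also decrement `active` when tasks complete or expire; that bookkeeping is omitted to keep the sketch short.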

These headwinds, however, pale against the upsides. Centralized giants grapple with data monopolies and opacity; blockchain flips the script, enabling transparent, auditable data labeling platforms. Imagine AI models trained on contest-verified datasets, resilient to adversarial inputs, powering DeFi risk engines or NFT authenticity checks with high fidelity.

Forward momentum accelerates. As 2026 unfolds, expect hybrid models blending AI-assisted pre-labeling with token-incentivized human oversight, slashing costs further. Enterprises eye white-label solutions for branded deployments, while startups launch via streamlined tokenomics playbooks. The fusion of crypto rewards for AI annotation with Web3 primitives isn’t merely additive; it redefines data as a borderless asset class, where quality commands premium yields.
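A hybrid pipeline could route each item by the pre-labeling model's confidence: low-confidence items become paid human tasks, while confident ones are auto-accepted except for a random audit sample. The threshold, the audit rate, and the hash-based sampling below are illustrative assumptions, not a described platform design.

```python
import hashlib
from dataclasses import dataclass

CONFIDENCE_THRESHOLD = 0.9  # hypothetical cutoff for trusting the model's label
AUDIT_RATE = 0.05           # hypothetical share of confident items still sent to humans


@dataclass
class PreLabel:
    item_id: str
    label: str
    confidence: float  # model's probability for its proposed label


def sampled_for_audit(item_id: str, rate: float = AUDIT_RATE) -> bool:
    """Deterministic sampling: map the item id to a 64-bit number via SHA-256."""
    bucket = int.from_bytes(hashlib.sha256(item_id.encode()).digest()[:8], "big")
    return bucket < rate * 2**64


def route(pre: PreLabel) -> str:
    if pre.confidence < CONFIDENCE_THRESHOLD:
        return "human"  # token-paid task; the model's label is shown as a suggestion
    if sampled_for_audit(pre.item_id):
        return "audit"  # confident, but spot-checked by a paid human validator
    return "auto"       # accepted without a paid human task


for pre in [PreLabel("img-001", "car", 0.97), PreLabel("img-002", "car", 0.62)]:
    print(pre.item_id, route(pre))
```

Hashing the item id instead of drawing from a random generator makes the audit decision reproducible, so anyone could later recompute which items should have been audited.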

Stakeholders from developers to validators stand to gain in this merit-driven arena. Platforms rewarding precision over volume herald a maturing intersection of AI and blockchain, where incentives forge not just datasets, but foundational trust in intelligent systems. Patience here mirrors timeless investing: fundamentals of aligned economics yield compounding returns in the AI gold rush.