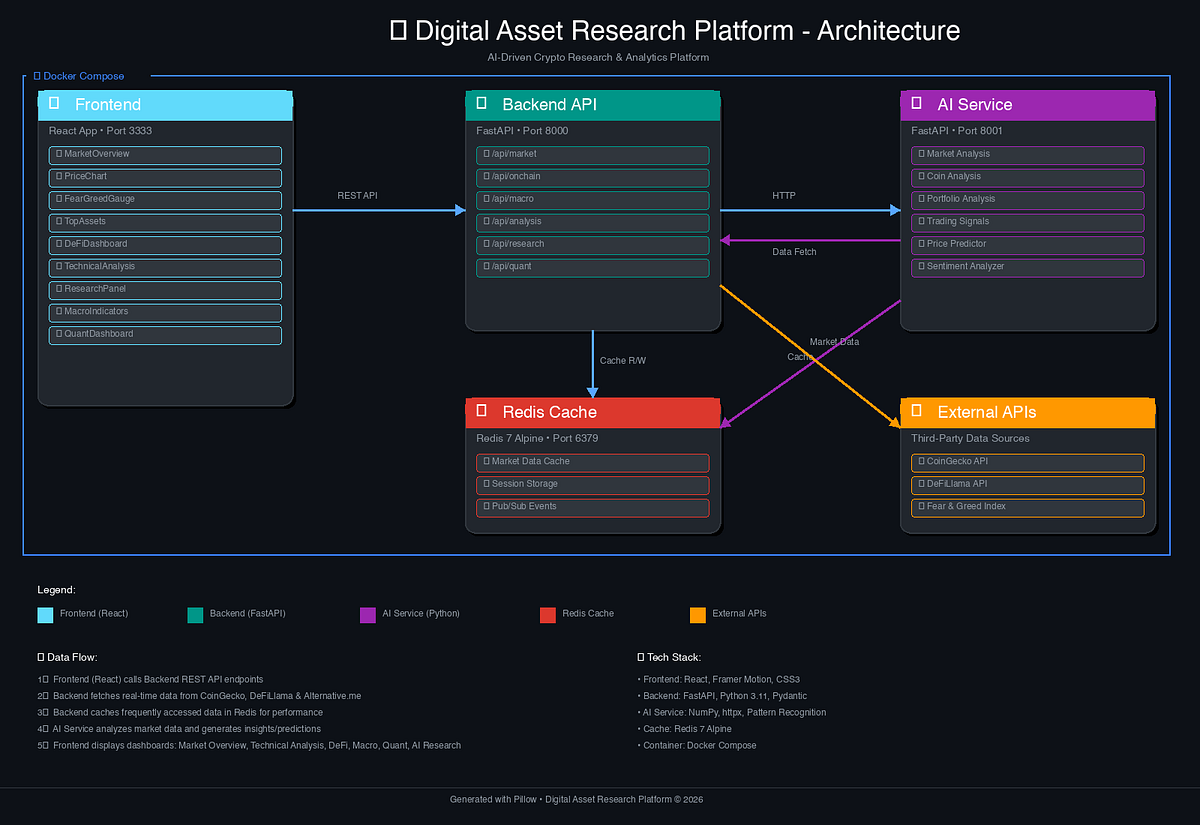

Token Rewards Driving High-Quality AI Data Annotation on Blockchain Platforms 2026

In the rapidly evolving intersection of artificial intelligence and blockchain technology, token rewards are emerging as a compelling mechanism to elevate the quality of data annotation. By 2026, platforms leveraging cryptocurrency incentives have transformed what was once a labor-intensive, error-prone process into a decentralized marketplace where contributors are directly motivated to deliver precise labels for images, text, and audio. This shift addresses longstanding pain points in AI development, where poor data quality undermines model performance and escalates costs. Yet, as a seasoned analyst, I approach this trend with measured optimism; the promise of blockchain AI data annotation hinges on robust tokenomics and verifiable outcomes, not mere hype.

Traditional data labeling relies on centralized crowdsourcing giants, often plagued by inconsistent quality, opaque payments, and exploitative wage structures. Contributors, typically underpaid gig workers, have little skin in the game, leading to rushed or inaccurate annotations that propagate biases into AI systems. Blockchain platforms disrupt this by introducing crypto rewards for data annotators, tying compensation to performance through smart contracts. Payments become transparent and instantaneous, fostering accountability. However, skeptics rightly question whether these tokens truly decentralize AI or merely repackage centralized control under a crypto veneer, as highlighted in recent academic reviews.

On-Chain Reputation Systems: The Backbone of Quality Control

At the core of these platforms lie on-chain reputation mechanisms for AI datasets. Labelers build verifiable scores based on task accuracy, peer validations, and automated checks, stored immutably on the blockchain. This creates a meritocratic ecosystem where high performers earn premium rewards, while low-quality inputs are filtered out. For instance, reputation can influence task assignments or staking requirements, ensuring only trusted annotators handle sensitive datasets. From an investment perspective, this gamifies diligence but demands scrutiny of sybil resistance; fake accounts could inflate scores if governance falters.
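To make the scoring idea concrete, here is a minimal sketch of how a reputation score might be updated after each task and then used to gate sensitive work. It is not any platform's actual formula: the exponential moving average, the `difficulty` multiplier, the `alpha` rate, and the 0.8 threshold are all assumptions chosen for illustration, and the on-chain storage itself is not modeled.

```python
from dataclasses import dataclass


@dataclass
class Labeler:
    address: str
    reputation: float = 0.5  # hypothetical neutral starting score in [0, 1]


def update_reputation(labeler: Labeler, accuracy: float,
                      difficulty: float = 1.0, alpha: float = 0.1) -> float:
    """Blend the latest task accuracy into the running score.

    Harder tasks (difficulty > 1) move the score faster; the blend
    weight is capped at 1 so the score always stays inside [0, 1].
    """
    if not 0.0 <= accuracy <= 1.0:
        raise ValueError("accuracy must be between 0 and 1")
    if difficulty < 0:
        raise ValueError("difficulty must be non-negative")
    weight = min(1.0, alpha * difficulty)
    labeler.reputation = (1 - weight) * labeler.reputation + weight * accuracy
    return labeler.reputation


def eligible_for_sensitive_tasks(labeler: Labeler, threshold: float = 0.8) -> bool:
    """Gate sensitive datasets behind a minimum reputation (assumed threshold)."""
    return labeler.reputation >= threshold
```

A new labeler who completes one perfectly accurate task of default difficulty moves from 0.5 to 0.55, so reaching the gate takes a sustained record rather than a single good submission.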

Core On-Chain Reputation Features

- Peer reviews with token bounties: Labelers assess peers’ annotations, earning tokens for constructive feedback to foster accountability, as implemented in Sahara AI.
- Automated quality audits via AI validators: AI tools cross-verify submissions using models like normal distribution or swarm intelligence, filtering outliers cautiously, as exemplified by Alaya AI.
- Dynamic scoring tied to task complexity: Reputation adjusts based on task difficulty and performance, with rewards scaled proportionally, as in Bittensor’s value-based incentives.
- Staking penalties for disputes: Contributors stake tokens and face slashing in conflicts to deter malice, supporting sustainable engagement, as in OanicAI’s system.
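The staking penalty in the last item can be sketched as a small ledger. This is an illustrative model only: the `StakeLedger` class, the 10% slash rate expressed in basis points, and the choice to pay the slashed amount to the winning party are assumptions, not a description of how OanicAI or any other platform settles disputes.

```python
class StakeLedger:
    """Toy in-memory ledger; balances are integer token units."""

    def __init__(self, slash_bps: int = 1_000):  # 1,000 bps = 10% (assumed rate)
        if not 0 <= slash_bps <= 10_000:
            raise ValueError("slash_bps must be between 0 and 10,000")
        self.slash_bps = slash_bps
        self.stakes: dict[str, int] = {}

    def deposit(self, address: str, amount: int) -> None:
        if amount <= 0:
            raise ValueError("deposit must be positive")
        self.stakes[address] = self.stakes.get(address, 0) + amount

    def resolve_dispute(self, loser: str, winner: str) -> int:
        """Slash the losing party's stake and credit it to the winner."""
        penalty = self.stakes.get(loser, 0) * self.slash_bps // 10_000
        self.stakes[loser] = self.stakes.get(loser, 0) - penalty
        self.stakes[winner] = self.stakes.get(winner, 0) + penalty
        return penalty
```

Integer arithmetic with basis points mirrors how token amounts are usually handled on-chain, where fractional units are avoided.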

Such systems not only incentivize precision but also scale globally, drawing diverse contributors from emerging markets where traditional platforms overlook talent. Still, macroeconomic headwinds like token volatility pose risks; a bear market could erode incentives and bring back low-effort participation.

Pioneering Platforms Leading the Charge in 2026

Sahara AI’s Data Services Platform, launched mid-2025, exemplifies this evolution with over $450,000 in token bounties for tasks like image labeling and text evaluation. Contributors earn $SAHARA tokens, USDC, or partner assets, backed by peer reviews and reputation scoring. Alaya AI adds gamification flair, using normal distribution models to weed out outliers and swarm intelligence for consensus, rewarding users with AIA tokens redeemable for NFTs and perks. These innovations make annotation engaging, yet I caution that gamified elements risk superficial participation if core utilities weaken.
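As a rough illustration of the normal-distribution idea, the sketch below drops numeric annotations that sit far from the batch mean and averages the rest. It is an assumption about how such a filter could work, not Alaya AI's implementation; the function names and the z-score threshold of 2.0 are hypothetical, and the swarm-intelligence consensus step is not modeled.

```python
from statistics import mean, pstdev


def filter_outliers(values: list[float], z_threshold: float = 2.0) -> list[float]:
    """Keep values whose z-score is within the threshold.

    Uses the population standard deviation. Note that with n values the
    largest possible z-score is sqrt(n - 1), so very small batches need
    a lower threshold for the filter to have any effect.
    """
    mu = mean(values)
    sigma = pstdev(values)
    if sigma == 0:  # all annotators agree, nothing to filter
        return list(values)
    return [v for v in values if abs(v - mu) / sigma <= z_threshold]


def consensus_value(values: list[float], z_threshold: float = 2.0) -> float:
    """Average the annotations that survive the outlier filter."""
    return mean(filter_outliers(values, z_threshold))
```

For example, if eight annotators rate an item as `[10, 11, 9, 10, 10, 11, 9, 50]`, the value 50 has a z-score of roughly 2.6 and is dropped, and the consensus of the remaining seven is 10.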

Tokenomics Under the Hood: Balancing Incentives and Sustainability

Diving deeper, decentralized data labeling platforms employ varied economic models to sustain rewards. Tagger’s AI Copilot tool streamlines annotations across data types, paying in $TAG via smart contracts that enforce transparency and data ownership. Bittensor, a veteran since 2021, extends this to machine intelligence markets, where models compete for TAO tokens based on collective value added. OanicAI rounds out the field with reputation-driven staking and governance, powering a marketplace for verified datasets. Analytically, these structures promote long-term alignment, but token dilution from emissions warrants vigilant due diligence. Inflationary pressures could undermine value accrual, echoing pitfalls in broader crypto narratives. Platforms succeeding here prioritize deflationary mechanics and token burns tied to real utility, ensuring rewards reflect genuine contributions rather than speculative froth.
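The dilution concern reduces to simple arithmetic: emissions add to supply, burns subtract from it, and a holder's share moves with the net change. The sketch below shows that calculation under stated assumptions; the function names and the sample figures are hypothetical and do not describe any listed token.

```python
def net_inflation_rate(circulating: float, emitted: float, burned: float) -> float:
    """Net supply growth over a period as a fraction of starting supply."""
    if circulating <= 0:
        raise ValueError("circulating supply must be positive")
    return (emitted - burned) / circulating


def ownership_share(holding: float, circulating: float,
                    emitted: float, burned: float) -> float:
    """A fixed holding's share of supply after emissions and burns."""
    new_supply = circulating + emitted - burned
    if new_supply <= 0:
        raise ValueError("resulting supply must be positive")
    return holding / new_supply


# Hypothetical period: 1,000,000 tokens circulating, 50,000 emitted as
# rewards, 20,000 burned on task completions.
rate = net_inflation_rate(1_000_000, 50_000, 20_000)
```

With these made-up numbers the net inflation is 3% for the period; a token is deflationary in this sense only when burns exceed emissions and the rate turns negative.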

Evaluating these platforms through a fundamental lens reveals stark differences in execution. While Sahara AI and Alaya AI prioritize user-friendly task interfaces with broad reward options, Bittensor’s protocol-level incentives target sophisticated ML contributors, fostering deeper network effects. Tagger and OanicAI emphasize data marketplaces, where annotations translate into tradable assets, potentially unlocking secondary revenue streams. This diversity strengthens the ecosystem but amplifies selection risks for investors; not all will navigate token unlocks or adoption hurdles unscathed.

Comparison of Leading Token-Incentivized Data Labeling Platforms (2026) 📊

| Platform | Native Token | Key Features (reputation, validation methods) | Reward Types | Blockchain Base |
|---|---|---|---|---|
| Sahara AI 🚀 | $SAHARA | Automated checks ✅, peer reviews 👥, reputation scoring 🏅 | Cryptocurrency for tasks ($SAHARA, USDC, partner tokens) 💰 | Blockchain-based |
| Alaya AI 🎮 | AIA | Normal distribution 📈, swarm intelligence 🐝, cross-referencing 🔗 | AIA tokens (NFT upgrades, events, achievements) 🏆 | Blockchain-based |
| Tagger 🏷️ | $TAG | AI Copilot 📱, smart contracts 🔒, transparent public results | Completion rewards 💵 | Blockchain (Smart Contracts) |
| Bittensor 🧠 | TAO | Peer-to-peer market 🤝, informational value scoring 📊, contribution reputation | TAO proportional to value 🪙 | Bittensor Network |
| OanicAI 🔍 | Proprietary Token | Reputation-based rewards 🏅, verified datasets ✅ | Labeling/verification, staking, governance 🔒🪙 | Blockchain-based |

From a macroeconomic vantage, token-incentivized data labeling aligns with surging AI compute demands, projected to consume 10% of global electricity by decade’s end. Blockchain’s transparency counters data silos, enabling collaborative datasets immune to single-point failures. Yet, I remain guarded against over-optimism. Volatility in crypto markets can slash real-world rewards; a 50% token drawdown might deter contributors and reverse quality gains. Moreover, regulatory scrutiny looms over unverified datasets used in high-stakes applications like autonomous vehicles or medical diagnostics. Platforms must embed compliance early, or face delisting risks akin to past ICO casualties.

Challenges Ahead: Navigating Risks in a Maturing Ecosystem

Sybil attacks persist as a thorn, despite staking mechanisms; sophisticated bots could game reputation scores, diluting pool integrity. Academic critiques, such as those probing AI-based crypto tokens, underscore this: many projects present themselves as decentralized while validators consolidate power. Peer-reviewed studies on blockchain incentives for content creation echo similar pitfalls in non-AI domains, where initial hype yields to coordination failures. Prudent platforms counter with multi-signature approvals and zero-knowledge proofs for privacy-preserving audits, but implementation lags in nascent networks.

Scalability bottlenecks further test resolve. Solana-based Synesis One, for example, rewards AI training contributions but grapples with congestion during peak bounties. Ethereum Layer-2 solutions offer relief, yet gas fees erode micro-rewards for low-volume annotators. Investors should prioritize platforms with adaptive fee structures and cross-chain bridges, ensuring crypto rewards for data annotators remain viable amid network flux.
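The way fees erode micro-rewards is easiest to see with numbers. The sketch below compares a small payout before and after a token price drop while the network fee stays fixed in dollar terms. All figures and function names are hypothetical, and a fee fixed in USD is a simplifying assumption, since real fees are paid in the chain's native asset and fluctuate.

```python
def net_reward_usd(tokens_paid: float, token_price_usd: float, fee_usd: float) -> float:
    """Dollar value of a payout after subtracting the transaction fee."""
    return tokens_paid * token_price_usd - fee_usd


def fee_share(tokens_paid: float, token_price_usd: float, fee_usd: float) -> float:
    """Fraction of the gross payout consumed by the fee."""
    gross = tokens_paid * token_price_usd
    return fee_usd / gross if gross > 0 else float("inf")


# Hypothetical micro-task: 2 tokens at $0.50 each, $0.05 network fee.
before = fee_share(2, 0.50, 0.05)
# Same task after a 50% token drawdown; the fee is unchanged.
after = fee_share(2, 0.25, 0.05)
```

In this example the fee takes 5% of the payout before the drawdown and 10% after it, which is why batching payouts or adapting fee structures matters most for low-volume annotators.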

Beyond technical hurdles, human factors shape outcomes. Global workforce dynamics favor blockchain’s borderless access, empowering annotators in high-cost regions to compete via premium tasks. Still, cultural biases in labeling persist; diverse reputation-weighted consensus is essential to mitigate them. My experience in asset management underscores that sustainable models hinge on recurring utility, not one-off airdrops. Platforms burning tokens on task completions or governance votes build deflationary moats, rewarding early adherents over speculators.

Investment Implications: Prudence in a High-Potential Space

For portfolios eyeing the AI-blockchain nexus, allocate conservatively to proven leaders like Bittensor, whose TAO has demonstrated resilience through cycles. Diversify across ecosystems: Solana for speed, Ethereum for security. Monitor on-chain metrics (active labelers, bounty fulfillment rates, token velocity) as leading indicators of health. Avoid FOMO-driven entries; wait for audited tokenomics and partnerships with AI incumbents. Wealth accrues to those dissecting whitepapers against real throughput, not chasing narrative pumps.
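For readers who want to track these indicators, here is one way they might be computed from a period's data. The `PeriodSnapshot` fields are hypothetical, and token velocity is taken as transfer volume divided by average circulating supply, which is one common definition rather than a standard every platform reports.

```python
from dataclasses import dataclass


@dataclass
class PeriodSnapshot:
    active_labelers: int
    bounties_posted: int
    bounties_fulfilled: int
    transfer_volume: float         # tokens transferred during the period
    avg_circulating_supply: float  # average tokens in circulation


def fulfillment_rate(s: PeriodSnapshot) -> float:
    """Share of posted bounties that were completed."""
    return s.bounties_fulfilled / s.bounties_posted if s.bounties_posted else 0.0


def token_velocity(s: PeriodSnapshot) -> float:
    """How many times the average token changed hands in the period."""
    if s.avg_circulating_supply <= 0:
        return 0.0
    return s.transfer_volume / s.avg_circulating_supply


# Made-up figures for illustration only.
month = PeriodSnapshot(1_200, 400, 340, 2_500_000, 10_000_000)
```

With these made-up figures the fulfillment rate is 0.85 and the velocity is 0.25; the trend across periods is more informative than any single reading.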

This token-driven paradigm, when executed with discipline, could redefine AI foundations, yielding datasets rivaling proprietary giants. Platforms like Perle Labs and OpenLedger, though earlier entrants, preview specialized niches in model incentives. As adoption scales, expect integrations with Web3 wallets and DAOs, amplifying network effects. Yet, true decentralization demands vigilant governance; without it, we risk illusory progress. Patience, paired with rigorous analysis, positions savvy participants to capture enduring value in decentralized data labeling platforms.