Blockchain Platforms Using Tokens to Scale Global AI Data Annotation Workforces

The demand for vast, accurate datasets to train advanced AI models has exploded, but centralized labeling services struggle with scalability, bias, and escalating costs. Blockchain platforms are flipping the script by using tokens to rally a global army of annotators, creating decentralized AI annotation workforces that deliver unbiased, high-fidelity data at a fraction of the price. This fusion of crypto incentives and crowdsourced expertise isn’t just efficient; it’s a fundamental shift toward democratizing AI development, where everyday contributors worldwide earn crypto rewards for data annotation tasks like tagging images or transcribing audio.

These systems leverage smart contracts to automate payments, on-chain reputation scores to filter quality, and economic models that reward precision over volume. The result? AI projects access petabytes of labeled data without the headaches of managing in-house teams or outsourcing to opaque vendors. From startups to enterprises, the appeal is clear: faster iteration cycles and models that generalize better across diverse real-world scenarios.

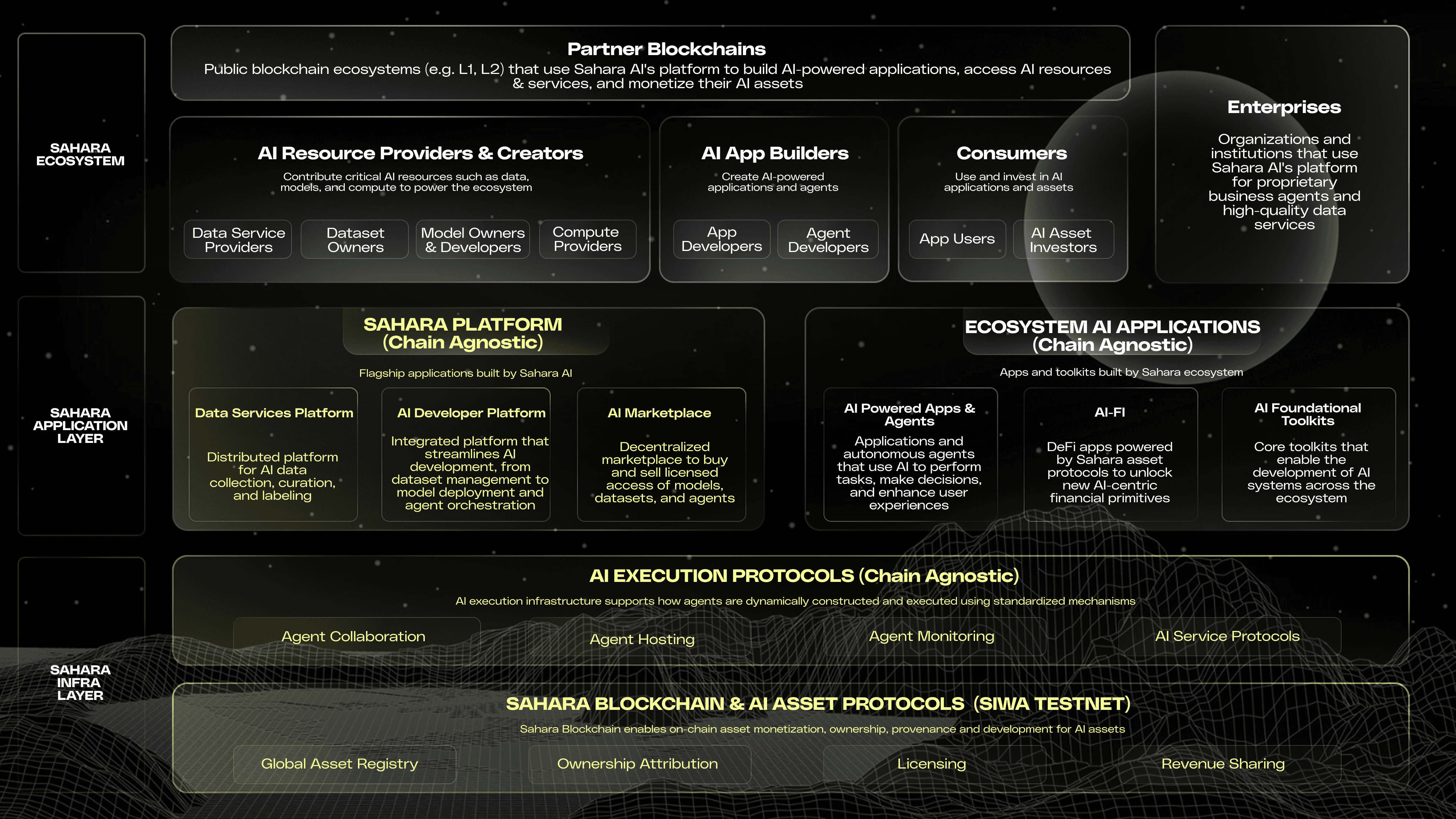

Sahara AI Leads with Bountied Data Services

Sahara AI’s Data Services Platform, rolled out in July 2025, exemplifies this trend by paying users in SAHARA tokens for microtasks such as image labeling and audio transcription. Backed by a hefty $43 million funding round from August 2024, the platform integrates automatic quality checks alongside reputation systems to weed out sloppy work. Contributors stake tokens on their submissions, facing slashes for inaccuracies, which aligns incentives toward excellence. This gamified approach has already drawn thousands, proving that token-incentivized global data labeling can outpace legacy methods in speed and diversity.
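To make the stake-and-slash idea concrete, here is a minimal Python sketch. It is not Sahara AI’s actual contract logic: the `Annotator` class, the `settle_submission` function, the flat reward, and the 50% slash fraction are all hypothetical choices made for illustration.

```python
from dataclasses import dataclass


@dataclass
class Annotator:
    name: str
    balance: float  # token balance in hypothetical units


def settle_submission(annotator, stake, passed_check, reward=1.0, slash_fraction=0.5):
    """Update and return the balance after a staked submission is reviewed.

    reward and slash_fraction are illustrative assumptions, not platform values.
    """
    if stake <= 0 or stake > annotator.balance:
        raise ValueError("stake must be positive and no larger than the balance")
    if passed_check:
        # Accurate work: the stake is kept and a reward is added.
        annotator.balance += reward
    else:
        # Inaccurate work: part of the stake is slashed.
        annotator.balance -= stake * slash_fraction
    return annotator.balance


worker = Annotator("alice", balance=10.0)
print(settle_submission(worker, stake=4.0, passed_check=True))
print(settle_submission(worker, stake=4.0, passed_check=False))
```

The point of the design is that a careless submission costs more than skipping the task, so volume alone stops being a winning strategy.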

What sets Sahara apart is its focus on verifiable outputs. Every annotation gets timestamped on-chain, creating an audit trail that AI developers trust implicitly. In a field plagued by fabricated labels, this transparency builds confidence, allowing seamless integration into training pipelines for computer vision and natural language models alike.
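One common way to build this kind of audit trail is to put only a fingerprint of each annotation on-chain and keep the full record off-chain. The sketch below assumes that pattern. Sahara’s actual record format is not described here, so the field names and the `annotation_digest` helper are hypothetical.

```python
import hashlib
import json


def annotation_digest(record: dict) -> str:
    """Return a SHA-256 hex digest of an annotation record.

    Keys are sorted so the same record always yields the same digest,
    regardless of the order in which its fields were written.
    """
    canonical = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


# Hypothetical annotation record; field names are illustrative only.
record = {"task_id": "img-001", "label": "cat", "annotator": "alice"}
print(annotation_digest(record))
```

Anyone holding the off-chain record can recompute the digest and compare it with the stored value, so a label altered after the fact no longer matches.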

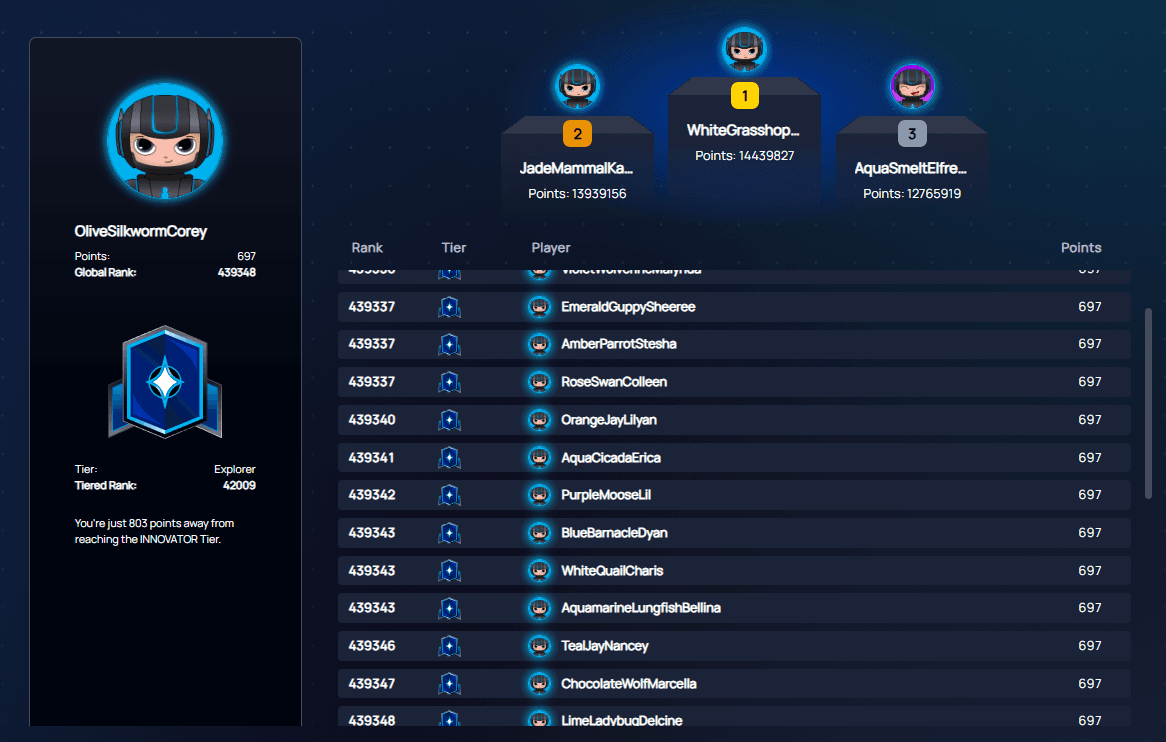

Sapien’s Proof of Quality Scales to Millions

Sapien takes crowdsourcing to another level, boasting over 1.8 million contributors from 110-plus countries as of December 2025. Workers earn SAPIEN tokens via microtasks like image tagging and text validation, but the magic lies in its Proof of Quality consensus mechanism. Without a central authority, participants vote on label accuracy, with token-weighted stakes ensuring honest consensus. Low performers lose rewards; top ones climb reputation ladders, unlocking premium gigs.
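A token-weighted vote of the kind described above can be sketched in a few lines of Python. This is an illustration of the general idea only, not Sapien’s actual Proof of Quality protocol; the `token_weighted_consensus` function and the example stakes are assumptions.

```python
from collections import defaultdict


def token_weighted_consensus(votes):
    """Pick the label backed by the most stake.

    votes: iterable of (label, stake) pairs.
    Returns (winning_label, winner_share_of_total_stake).
    """
    totals = defaultdict(float)
    for label, stake in votes:
        if stake <= 0:
            raise ValueError("stake must be positive")
        totals[label] += stake
    if not totals:
        raise ValueError("no votes cast")
    winner = max(totals, key=totals.get)
    return winner, totals[winner] / sum(totals.values())


# Two voters back "cat" with 30 and 40 tokens; one backs "dog" with 50.
label, share = token_weighted_consensus([("cat", 30), ("dog", 50), ("cat", 40)])
print(label, round(share, 3))
```

Note that the largest single staker ("dog", 50 tokens) still loses to the combined stake behind "cat", which is the behavior a peer-consensus scheme is after.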

This peer-reviewed system mimics open-source software’s meritocracy, fostering a self-sustaining ecosystem where quality begets more opportunities. Sapien’s reach across geographies injects cultural nuance into datasets, reducing the Western bias that hampers many AI systems. For developers, it means robust, multicultural data ready for deployment in global applications, from autonomous vehicles to personalized medicine.

Top Platforms’ Key Features

- Sahara AI: Data Services Platform uses SAHARA tokens for payments on tasks like image labeling; quality via automatic checks and reputation systems.
- Sapien: Proof of Quality peer consensus mechanism with SAPIEN token rewards for 1.8M+ global contributors on microtasks.
- Unbiased: On-chain reputation on the Telos Blockchain Data Marketplace, plus AI spam detection for transparent annotation.
- Bittensor: Decentralized ML network rewards contributors with TAO tokens based on the informational value of collaborative model training.
- Virtuals Protocol: Tokenized AI agents enable co-ownership, governance, and revenue sharing for contributors.

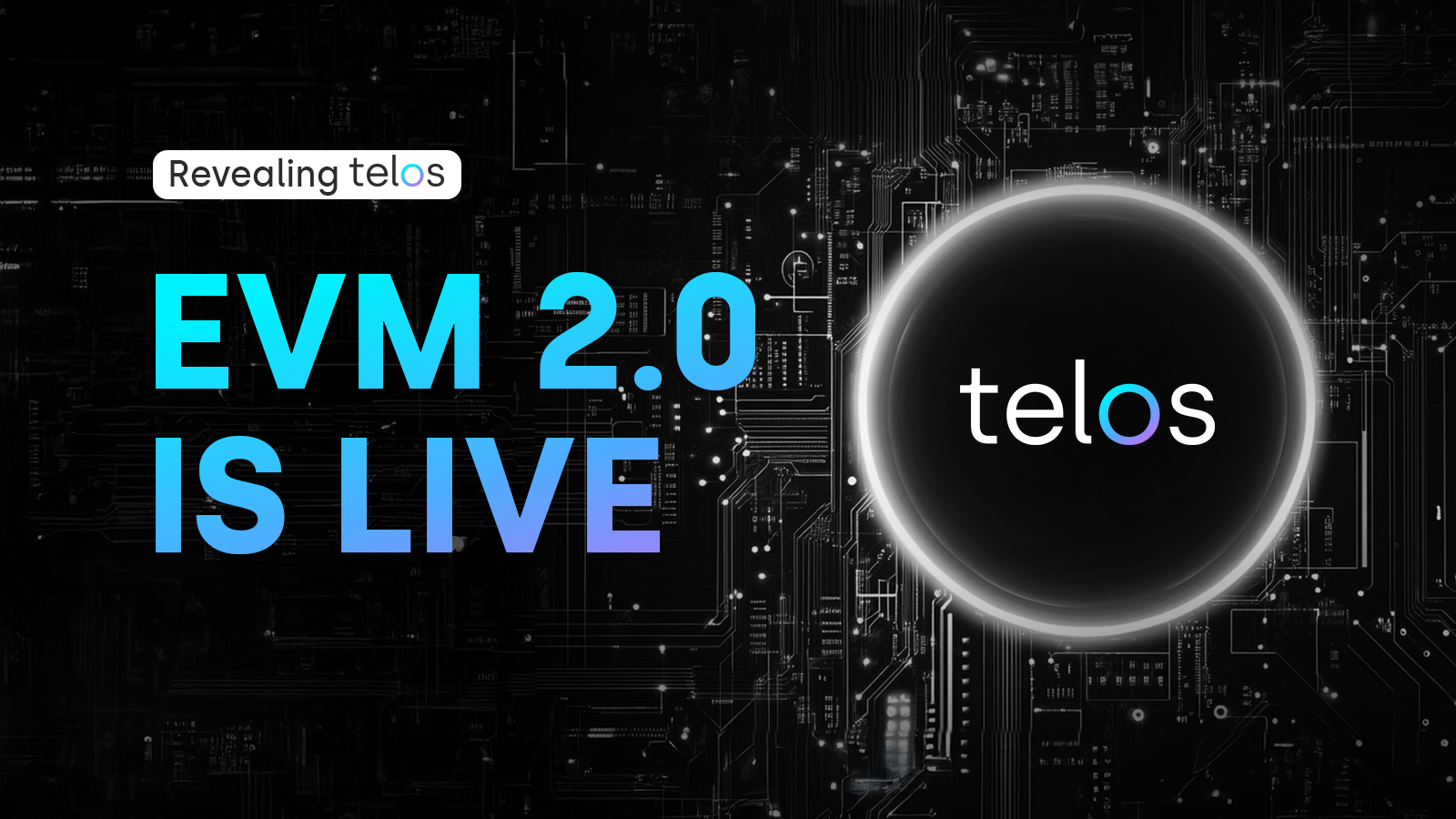

Unbiased and the Telos-Powered Data Marketplace

Built on the Telos Blockchain, Unbiased’s Data Marketplace prioritizes transparency by etching worker reputations directly on-chain. Annotators tackle diverse tasks while AI-driven spam detectors flag anomalies in real time. Tokens flow to those delivering clean, consistent labels, creating a merit-based marketplace where buyers specify exact requirements, from sentiment analysis to object detection. This setup not only scales effortlessly but also mitigates fraud, a persistent thorn in the side of decentralized annotation.

The platform’s strength shines in its integration of human and machine checks, blending crowd wisdom with algorithmic oversight. As AI edges toward autonomy, Unbiased ensures the foundational data remains tamper-proof and representative, empowering builders to focus on innovation rather than verification drudgery.

While Unbiased excels in marketplace dynamics, platforms like Bittensor push boundaries by turning machine intelligence itself into a tokenized commodity. This evolution signals a deeper integration where data annotation feeds directly into collaborative model training, amplifying the value chain.

Bittensor: Tokenizing Collective Intelligence

Founded in 2021, Bittensor reimagines AI development as a decentralized neural network where participants contribute computational resources and data insights to train models collectively. Miners earn TAO tokens proportional to the informational value their contributions add, measured through sophisticated peer validation protocols. Unlike traditional annotation silos, Bittensor blurs lines between labeling, inference, and optimization, creating a self-improving ecosystem that scales with network participation.
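The phrase "proportional to the informational value" can be read as a simple pro-rata split, sketched below in Python. This is a deliberately simplified illustration and not Bittensor’s actual reward mechanism; the `split_rewards` function, the scores, and the emission amount are all hypothetical.

```python
def split_rewards(scores, emission):
    """Divide a token emission among contributors in proportion to their scores.

    scores: mapping of contributor -> non-negative value score from validators.
    emission: total tokens to distribute in this round.
    """
    if any(score < 0 for score in scores.values()):
        raise ValueError("scores must be non-negative")
    total = sum(scores.values())
    if total == 0:
        # Nobody added measurable value, so nothing is paid out.
        return {name: 0.0 for name in scores}
    return {name: emission * score / total for name, score in scores.items()}


# Hypothetical round: miner_a is scored three times as valuable as miner_b.
print(split_rewards({"miner_a": 3, "miner_b": 1}, emission=100))
```

Under this reading, a contributor who submits noise earns a score near zero and therefore close to nothing, however much they submit.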

Critics might dismiss it as overly abstract, but Bittensor’s proof-of-intelligence mechanism weeds out noise, rewarding substantive advancements in areas like natural language understanding or predictive analytics. For AI builders weary of static datasets, this dynamic flow of fresh, vetted annotations means models that adapt in real time, outpacing centralized labs in agility and cost-efficiency. It’s a bold bet on open intelligence markets, one that’s already commoditizing smarts in ways that echo stock exchanges for code.

Virtuals Protocol: AI Agents as Revenue Generators

Launched in March 2024, Virtuals Protocol goes a step further by tokenizing AI agents themselves, enabling co-ownership among creators, annotators, and users. Contributors label interaction data or fine-tune behaviors to enhance agent performance in virtual worlds, entertainment, or customer service, earning shares of generated revenue through protocol tokens. This model extends crypto rewards for data annotation beyond raw labeling to iterative agent evolution, where quality inputs directly boost commercial viability.

Governance tokens let stakeholders vote on development roadmaps, ensuring annotations align with market demands. In practice, it fosters specialized workforces for niche domains, like gaming NPCs or personalized chatbots, delivering hyper-targeted datasets that generic platforms can’t match. Virtuals embodies the creative potential of token-incentivized global data labeling, where global talent co-builds profitable AI entities rather than mere data points.

Comparison of Blockchain Platforms Using Tokens for AI Data Annotation

| Platform | Token | Key Features | Scale & Incentives | Quality Mechanism |
|---|---|---|---|---|
| Sahara AI | SAHARA | Data Services Platform (DSP), bounties for image labeling & audio transcription | Launched July 2025, $43M funding (Aug 2024), $450K+ token bounties | Quality staking, automatic checks, reputation systems |
| Sapien | SAPIEN | Microtasks like image tagging & text validation | 1.8M+ contributors across 110+ countries | Proof of Quality consensus, peer consensus |
| Bittensor | TAO | Decentralized ML network, collaborative model training | Founded 2021, commoditizes machine intelligence | Rewards based on informational value |
| Virtuals Protocol | Agent Tokens | Tokenized AI agents for revenue generation & co-ownership | Launched March 2024, revenue share model | Governance & revenue sharing incentives |
| Unbiased | Telos | Data Marketplace on Telos Blockchain | Global transparency in annotation | On-chain reputation, AI-based spam recognition |

Beyond these leaders, emerging players like PublicAI scale multi-modal annotation for text, audio, video, and mapping, while Timeworx.io redefines workflows in decentralized setups. Each reinforces a core truth: token economics solve the annotation trilemma of scale, quality, and bias. By staking reputation and rewards on accuracy, these blockchain-based AI annotation workforces create virtuous cycles, where better data begets sharper models, attracting more contributors in a global flywheel.

The economic logic is compelling. Traditional labeling costs $0.10 to $2 per image; blockchain alternatives slash that by 70% through automation and competition, per industry benchmarks. Yet the savings pale against the qualitative leaps: diverse annotators from 100+ countries infuse datasets with underrepresented perspectives, curbing AI’s echo-chamber pitfalls. Investors, take note: with AI capex projected to hit trillions, platforms capturing this data layer stand to dominate.
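To see what those figures mean at dataset scale, the arithmetic can be worked through in Python. The per-image prices and the 70% reduction come from the paragraph above; the one-million-image volume and the `discounted_cost_range` helper are assumptions added for illustration.

```python
from decimal import Decimal


def discounted_cost_range(low, high, reduction, images):
    """Return (low_total, high_total) in dollars after a fractional cost reduction.

    Prices and the reduction are passed as strings so Decimal keeps them exact.
    """
    factor = Decimal("1") - Decimal(reduction)
    return (Decimal(low) * factor * images, Decimal(high) * factor * images)


IMAGES = 1_000_000  # assumed dataset size, for illustration only

baseline = discounted_cost_range("0.10", "2", "0", IMAGES)
reduced = discounted_cost_range("0.10", "2", "0.70", IMAGES)
print("baseline:", baseline)
print("after 70% reduction:", reduced)
```

For a million images, the baseline works out to between $100,000 and $2,000,000, and the reduced range to between $30,000 and $600,000.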

Challenges persist, from oracle dependencies for off-chain verification to token volatility testing sustained participation. Still, iterative upgrades, like Bittensor’s subnet innovations or Sapien’s consensus tweaks, show resilience. As regulatory clarity emerges, expect explosive growth, cementing tokens as the lifeblood of scalable AI foundations. This isn’t hype; it’s the infrastructure upgrade AI has craved, powered by blockchain’s unyielding incentives.