In the pulsating nexus of artificial intelligence and blockchain, a quiet revolution is brewing around smart contract data labeling. AI blockchain projects crave precise annotations to train models that audit, optimize, and secure smart contracts, yet traditional labeling falls short on scale, trust, and motivation. Enter token rewards: cryptocurrency incentives that turn global contributors into vigilant annotators, fueling decentralized ecosystems with verified data. This isn't mere speculation; it's a pragmatic shift, visible on platforms like ChainLabel, where $LABEL tokens directly reward accurate labeling, and it echoes Sahara AI's broader vision of using blockchain to decentralize data collection.

Unlocking Precision Through Token Incentives

Smart contracts, the self-executing programs that run on blockchains like Ethereum, demand meticulous annotation before AI can grasp their vulnerabilities, gas efficiency, or compliance quirks. Manual labeling by experts is bottlenecked by cost and bias; crowdsourcing dilutes quality. Token incentives in blockchain AI flip this script: contributors stake tokens, or earn them, based on annotation accuracy verified on-chain. Sahara AI highlights how decentralization sidesteps central chokepoints, and ChainLabel's model puts it into practice: log in, label smart contract data accurately, pocket $LABEL. This aligns incentives sharply, as Sandeep Chinchali notes in tokenomics designs that sustain growth through balanced economies.

Consider the stakes. Poor annotations breed flawed AI models, which let through the kind of smart contract exploits that drained billions in 2024 alone. Token rewards enforce skin in the game: low-quality work forfeits payouts, fostering a meritocracy. Research from IIT Kharagpur underscores blockchain's role in secure data sharing for AI training, while an article in a Frontiers journal praises crypto tokens for transparent dataset funding.

Key Token Reward Benefits

- Drives Participation: Tokens incentivize users to annotate smart contracts, building large datasets. Example: ChainLabel ($LABEL) rewards accurate labelers directly.

- Ensures Quality: Smart contracts automate evaluation, rewarding only superior contributions. Example: Lennard Jones Token pays for optimized particle structures whose energies beat the best entries in a reference database.

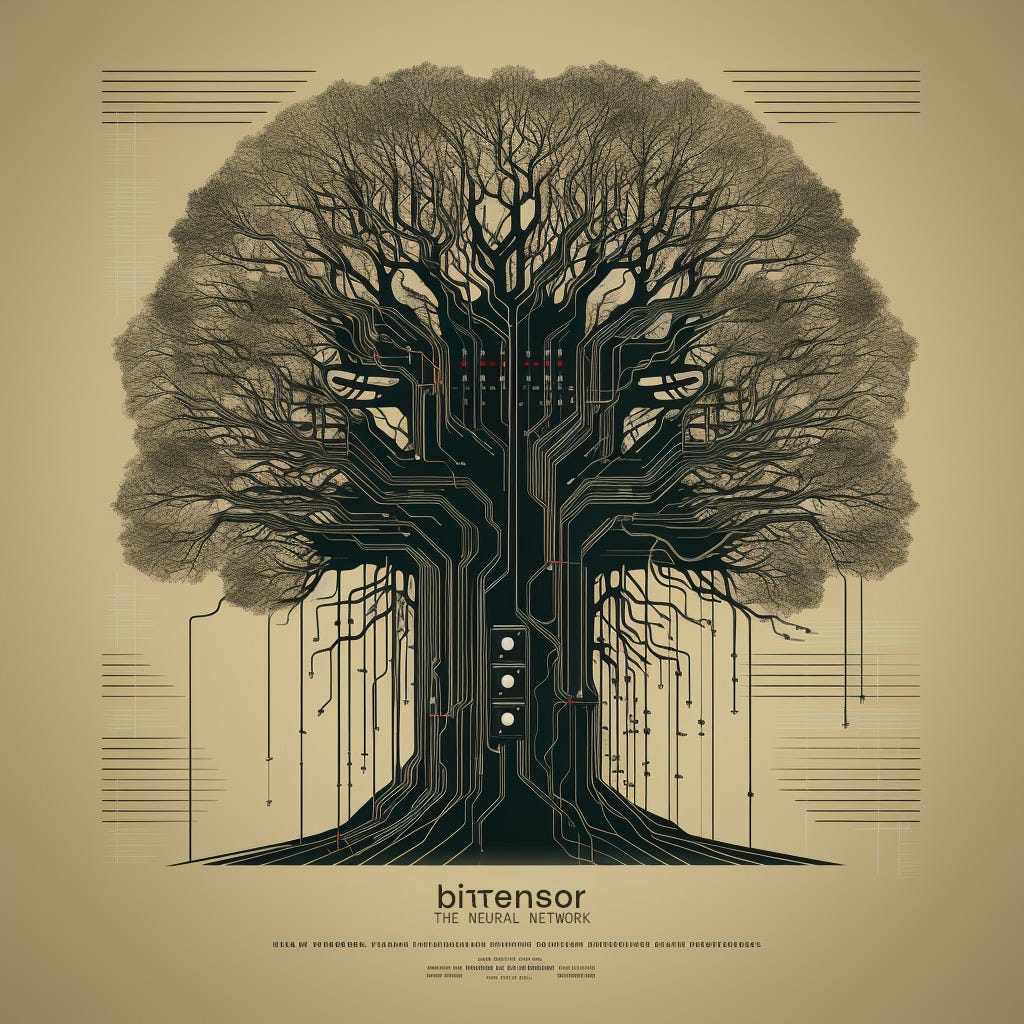

- Builds AI Marketplaces: Creates decentralized networks for AI models with performance-based rewards. Example: Bittensor (TAO) distributes tokens to top-performing model providers.

- Enables On-Chain AI: Integrates AI into smart contracts, rewarding model uploads and usage. Example: Cortex (CTXC) lets contributors earn for blockchain AI services.

- Monetizes AI Services: Powers transactions in decentralized AI ecosystems via tokens. Example: SingularityNET (AGIX) facilitates AI service marketplace rewards.

Pioneering Projects Leading the Charge

Real-world deployments illuminate the path. Bittensor's TAO tokens reward AI model contributions in a peer-evaluated network, a model that extends naturally to smart contract analysis. Cortex integrates AI directly into smart contracts, compensating developers with CTXC for model uploads that refine on-chain logic. SingularityNET's AGIX powers a marketplace where AI services, including annotation tools, are monetized via tokens. These aren't outliers: Perle Labs builds human-verified datasets with on-chain rewards, and Lennard Jones Token automates evaluations of optimized structures, rewarding only superior submissions via smart contracts.

ChainUp's list of top AI crypto tokens for 2025 spotlights such innovations, weighing use cases against risks like token volatility. An arXiv review, however, tempers the hype, scrutinizing whether token utility in these architectures drives genuine decentralization or merely the illusion of it.

Comparison of AI Blockchain Projects: Token Rewards for Smart Contract Annotation

| Project | Token | Rewards Mechanism | Smart Contract Focus |
|---|---|---|---|
| Bittensor | TAO | Participants provide AI models to the network, evaluated on performance and rewarded with TAO tokens. | Global marketplace for AI models merging blockchain and AI 🤖🔗 |
| Cortex | CTXC | Contributors earn CTXC tokens for uploading and running AI models directly on the blockchain. | AI model integration into smart contracts 💻🔗 |
| SingularityNET | AGIX | Users monetize AI services in a decentralized marketplace with AGIX token transactions. | Decentralized AI marketplace leveraging blockchain 🛒🤖 |
| ChainLabel | $LABEL | Labelers earn $LABEL tokens for accurately labeling data on the platform. | Decentralized AI data labeling for smart contracts 📝🔍 |

Mechanics of On-Chain Annotation Rewards

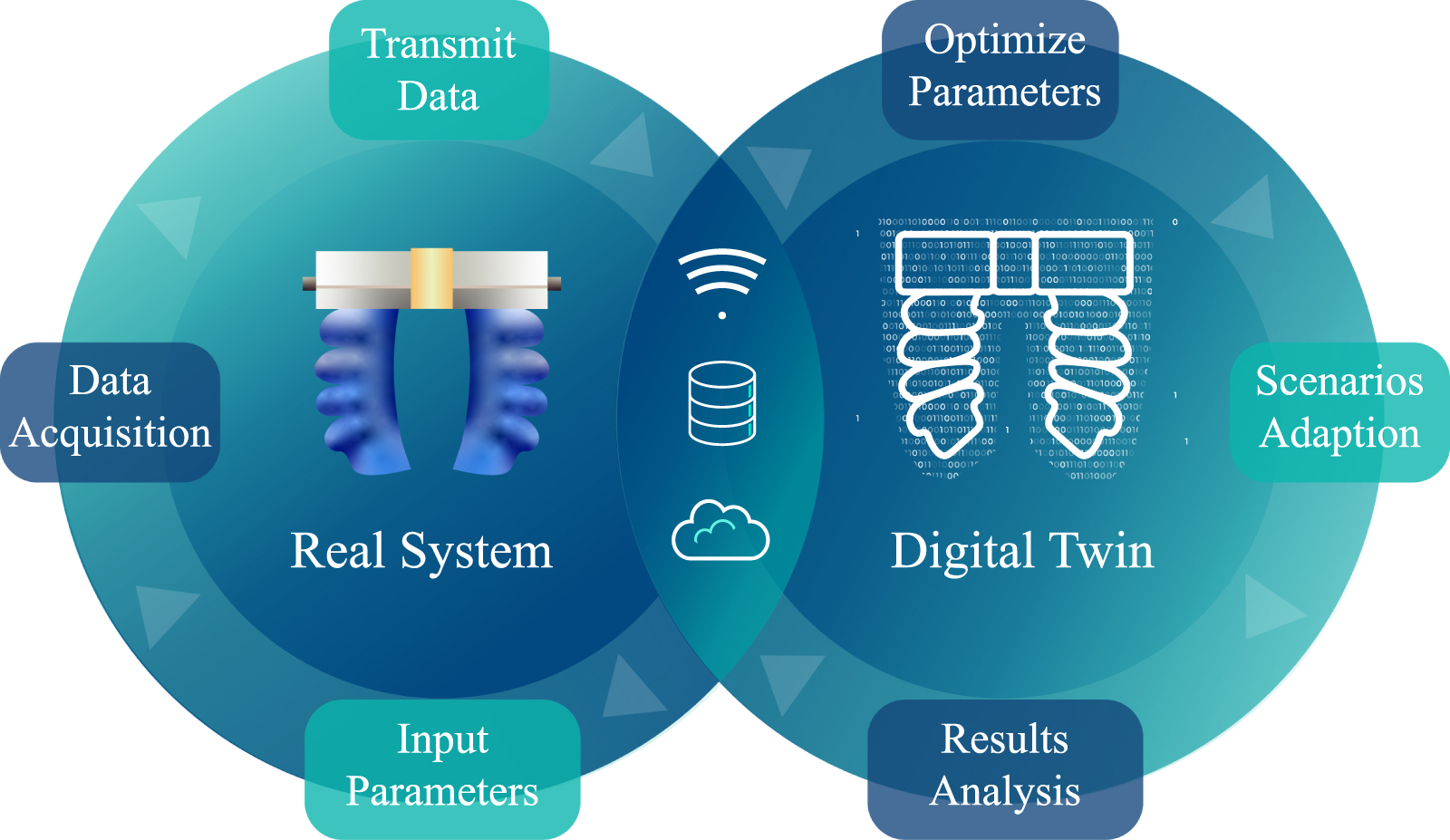

Diving deeper, AI crypto data annotation thrives on verifiable protocols. A typical flow: users access unlabeled smart contracts through dApps and annotate functions with tags for risks or optimizations. Smart contracts, fed by oracles, check the annotations against benchmarks or peer reviews and disburse tokens proportionally. Galaxy.com details how projects like Nous and Prime Intellect extend this to decentralized AI training, with crypto greasing the wheels.
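The flow above can be modeled off-chain in a few lines of Python. This is a minimal sketch, not ChainLabel's or any other platform's contract: the `Annotation` type, Jaccard-overlap scoring against a benchmark, the 0.8 accuracy threshold, and the pro-rata split of a fixed epoch pool are all illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Annotation:
    annotator: str
    contract_id: str
    labels: frozenset  # tags such as {"reentrancy", "unchecked-call"}


def accuracy(labels: frozenset, benchmark: frozenset) -> float:
    """Jaccard overlap between submitted tags and the benchmark tags (0.0 to 1.0)."""
    union = labels | benchmark
    if not union:
        return 1.0  # both empty: the contract was correctly marked clean
    return len(labels & benchmark) / len(union)


def disburse(annotations, benchmarks, epoch_pool: float, min_accuracy: float = 0.8):
    """Split a fixed epoch reward pool in proportion to accuracy.

    Submissions scoring below `min_accuracy` forfeit their payout entirely.
    """
    scores = {}
    for a in annotations:
        score = accuracy(a.labels, benchmarks[a.contract_id])
        if score >= min_accuracy:
            scores[a.annotator] = scores.get(a.annotator, 0.0) + score
    total = sum(scores.values())
    if total == 0:
        return {}  # nobody qualified this epoch
    return {who: epoch_pool * s / total for who, s in scores.items()}
```

For instance, if one contract's benchmark is `{"reentrancy"}` and another's is `{"gas-loop", "unchecked-call"}`, two annotators who match exactly split a 100-token pool 50/50, while a third who tags only `gas-loop` scores 0.5 and forfeits. Jaccard overlap is used here because it penalizes both missed and spurious tags.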

This on-chain reward model for contract labeling scales with its contributor base, tapping global talent pools. Tokenomics are designed for longevity: emissions taper as network value accrues, according to one Medium analysis. Contributors build reputation scores that unlock premium bounties, echoing the emphasis on verified datasets in CryptoRank's profile of Perle Labs. The result? AI models attuned to blockchain nuances, able to spot the subtle reentrancy bugs or inefficient loops that centralized labeling misses.
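Reputation that unlocks premium bounties can be as simple as a running average of accuracy. The sketch below assumes an exponential moving average and three hypothetical tiers (`standard`, `advanced`, `premium`) with made-up thresholds; neither Perle Labs nor any other project is known to use these exact values.

```python
# Hypothetical (threshold, tier) pairs in ascending order; not any platform's real values.
TIERS = [(0.0, "standard"), (0.75, "advanced"), (0.9, "premium")]


class Reputation:
    """Exponential moving average of an annotator's per-epoch accuracy scores."""

    def __init__(self, alpha: float = 0.2, initial: float = 0.5):
        self.alpha = alpha  # weight given to the newest score
        self.score = initial  # newcomers start in the middle

    def update(self, accuracy: float) -> float:
        self.score = self.alpha * accuracy + (1 - self.alpha) * self.score
        return self.score

    def tier(self) -> str:
        # Highest tier whose threshold the current score meets.
        unlocked = TIERS[0][1]
        for threshold, name in TIERS:
            if self.score >= threshold:
                unlocked = name
        return unlocked
```

Starting at 0.5, four perfect epochs lift the score to about 0.795 (advanced) and ten lift it to about 0.946 (premium). The moving average lets old mistakes fade while keeping a single lucky epoch from jumping a newcomer straight to premium bounties.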

Critically, this democratizes expertise. No longer gatekept by Silicon Valley firms, annotation becomes a borderless hustle, rewarding nuance in Solidity or Rust code. OneKey defines AI tokens as gateways to such protocols, blending access with incentives.

But token-driven annotation isn't without friction. Volatility in AI tokens can deter steady participation, as an arXiv review shows in its dissection of projects where speculative hype overshadows utility. Networks also face Sybil attacks, in which bad actors spin up many identities and flood low-quality labels to game rewards. Smart protocols counter this with slashing mechanisms, reputation-weighted voting, and zero-knowledge proofs for privacy-preserving verification. Frontiers' cryptographic open science framework reinforces this approach, keeping datasets tamper-proof while rewarding authenticity.
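To see why reputation-weighted voting blunts Sybil attacks, here is a sketch that resolves one contested label by summing voters' reputation per label and then slashing the stake of everyone who voted against the outcome. The 10% slash rate and the reputation weights are hypothetical, and the zero-knowledge verification step is not modeled.

```python
from collections import defaultdict


def settle_vote(votes, reputation, stakes, slash_rate=0.1):
    """Resolve one label by reputation-weighted vote and slash dissenters.

    votes:      {annotator: label}, must be non-empty
    reputation: {annotator: weight >= 0}; unknown annotators weigh 0
    stakes:     {annotator: staked tokens}; a new dict is returned
    """
    if not votes:
        raise ValueError("no votes to settle")
    weight = defaultdict(float)
    for who, label in votes.items():
        weight[label] += reputation.get(who, 0.0)
    winner = max(weight, key=weight.get)  # ties go to the first label seen
    new_stakes = dict(stakes)
    for who, label in votes.items():
        if label != winner:
            new_stakes[who] = stakes[who] * (1 - slash_rate)  # slash dissenters
    return winner, new_stakes
```

With three fresh Sybil accounts (reputation 0.1 each) voting `safe` and two established annotators (0.8 each) voting `reentrancy`, a head count would favor the Sybils 3 to 2, but the weighted tally is roughly 0.3 to 1.6, so `reentrancy` wins and each Sybil stake of 100 drops to 90.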

Navigating Risks in Token-Driven Ecosystems

Opinionated take: pure token incentives risk turning blockchain AI annotation into a pump-and-dump scheme if governance lags. ChainUp's 2025 rankings warn of regulatory headwinds and market saturation, yet sustainable models like Perle Labs' prioritize reputation over raw emissions. Projects must bake in deflationary burns tied to annotation volume, creating scarcity that mirrors data's rising value. Galaxy's spotlight on Nous and Templar shows crypto powering open AI training, but only if oracles reliably benchmark smart contract annotations against real exploits.
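Whether burns tied to annotation volume actually create scarcity depends on how fast volume grows relative to how fast emissions taper. The toy model below assumes geometric emission decay, geometric volume growth, and a fixed burn per label; every parameter is hypothetical and no project's real schedule is modeled.

```python
def net_supply_changes(emission, decay, volume, growth, burn_per_label, epochs):
    """Per-epoch net supply change: tapering emissions minus volume-linked burns."""
    changes = []
    for _ in range(epochs):
        burned = volume * burn_per_label  # burn scales with annotation volume
        changes.append(emission - burned)
        emission *= decay  # emissions taper each epoch
        volume *= growth  # annotation volume grows each epoch
    return changes


def first_deflationary_epoch(changes):
    """Index of the first epoch in which burns exceed emissions, else None."""
    return next((i for i, c in enumerate(changes) if c < 0), None)
```

With emissions starting at 1,000 tokens and decaying 10% per epoch, volume starting at 1,000 labels and growing 20% per epoch, and 0.5 tokens burned per label, net supply grows by 500, 300, and 90 tokens in the first three epochs and turns deflationary in the fourth (index 3).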

Pitfalls & Mitigations in Rewards

1. Sybil Attacks: Users create multiple fake identities to farm tokens. Mitigation: Reputation systems and staking, as used by Perle Labs for verified datasets.

2. Low-Quality Labels: Incentives lead to spam or inaccurate annotations. Mitigation: Performance-based evaluation, like Bittensor (TAO) rewards for high-performing AI models.

3. Verification Challenges: Labels are difficult to verify objectively on-chain. Mitigation: Automated smart contract evaluation, e.g., Lennard Jones Token only rewards superior structures.

4. Scalability Costs: High gas fees for on-chain submissions. Mitigation: Efficient model integration, as in Cortex (CTXC) for AI in smart contracts.

5. Incentive Misalignment: Short-term farming over long-term quality. Mitigation: Marketplace dynamics and accurate labeling rewards, per ChainLabel ($LABEL).
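The staking mitigation for Sybil attacks works because each fake identity has to lock its own stake. A back-of-the-envelope model, assuming a fixed reward per identity, an independent detection probability, and slashing of a fixed fraction of stake on detection (all hypothetical parameters), shows the break-even point:

```python
def sybil_expected_profit(identities, reward, stake, p_detect, slash_fraction):
    """Expected profit of farming with `identities` fake accounts.

    Each identity locks `stake`. If caught (probability p_detect) it loses its
    reward and `slash_fraction` of its stake; otherwise it keeps `reward`.
    """
    per_identity = (1 - p_detect) * reward - p_detect * slash_fraction * stake
    return identities * per_identity


def break_even_stake(reward, p_detect, slash_fraction):
    """Per-identity stake above which farming has negative expected profit."""
    return (1 - p_detect) * reward / (p_detect * slash_fraction)
```

With a 10-token reward, a 20% detection rate, and 50% slashing, farming turns unprofitable once the stake exceeds 80 tokens per identity. Because expected profit is linear in the number of identities, splitting into more accounts scales losses as readily as gains.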

Scalability hinges on layer-2 solutions. Ethereum's gas fees could choke micro-rewards, but Polygon or Optimism integrations, as hinted at in Cortex's architecture, keep costs negligible. Contributors can earn not just tokens but NFTs certifying expertise, tradeable on secondary markets. This adds a layer of utility, turning annotators into stakeholders whose insights compound network security.
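One widely used way to keep per-reward costs low, whichever layer-2 hosts the contract, is to compute an epoch's micro-rewards off-chain, post a single Merkle root on-chain, and let each contributor claim with a short proof. This is a general pattern, not something attributed to Cortex. The sketch uses SHA-256 from Python's standard library as a stand-in for the keccak256 hash that Ethereum contracts typically use, and it omits the leaf/node domain separation a production distributor should add.

```python
import hashlib


def _h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()  # stand-in for keccak256


def leaf(address: str, amount: int) -> bytes:
    """Hash one (claimant, amount) reward entry."""
    return _h(f"{address}:{amount}".encode())


def _pair(a: bytes, b: bytes) -> bytes:
    # Sort the two children so proofs need no left/right flags.
    return _h(min(a, b) + max(a, b))


def build_tree(leaves: list) -> tuple:
    """Return the Merkle root and one sibling-hash proof per leaf."""
    if not leaves:
        raise ValueError("need at least one reward entry")
    proofs = [[] for _ in leaves]
    positions = list(range(len(leaves)))  # each leaf's node index at this level
    level = list(leaves)
    while len(level) > 1:
        if len(level) % 2:
            level.append(level[-1])  # duplicate the last node on odd levels
        for i, pos in enumerate(positions):
            proofs[i].append(level[pos ^ 1])  # sibling of the current node
            positions[i] = pos // 2
        level = [_pair(level[j], level[j + 1]) for j in range(0, len(level), 2)]
    return level[0], proofs


def verify(leaf_hash: bytes, proof: list, root: bytes) -> bool:
    """The check a claim contract would run before paying out."""
    node = leaf_hash
    for sibling in proof:
        node = _pair(node, sibling)
    return node == root
```

For an epoch with thousands of micro-rewards, the chain stores one 32-byte root, and each claimant's proof grows only logarithmically with the number of entries.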

Tokenomics That Endure

At the heart lies tokenomics craftsmanship. Sandeep Chinchali's blueprint demands velocity controls: high initial rewards bootstrap liquidity, then give way to staking yields for long-haul labelers. Imagine a hypothetical $LABEL supply curve: 40% to annotators, vested over epochs according to accuracy scores. Bittensor executes this masterfully, with TAO holders voting on subnet allocations for smart contract niches. SingularityNET's AGIX adds marketplace fees recycled into bounties, fostering self-sustaining loops.
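The accuracy-vested supply curve can be made concrete. The sketch below assumes the hypothetical 40% annotator share, equal per-epoch tranches, and a simple rule that each tranche is released in proportion to that epoch's accuracy score; the function names and the handling of the withheld remainder are illustrative, not $LABEL's actual schedule.

```python
ANNOTATOR_SHARE = 0.40  # illustrative 40% figure, not a published spec


def annotator_pool(total_supply: float) -> float:
    """Tokens reserved for annotators under the hypothetical share."""
    return total_supply * ANNOTATOR_SHARE


def vest_by_accuracy(grant: float, epochs: int, accuracy_by_epoch: list) -> tuple:
    """Release a grant in equal per-epoch tranches scaled by that epoch's accuracy.

    Returns (released amount per epoch, total withheld). The withheld share
    could, for instance, be recycled into future bounties.
    """
    tranche = grant / epochs
    released = []
    for acc in accuracy_by_epoch[:epochs]:
        acc = min(max(acc, 0.0), 1.0)  # clamp scores to [0, 1]
        released.append(tranche * acc)
    withheld = tranche * len(released) - sum(released)
    return released, withheld
```

A 1,200-token grant over four epochs with accuracy scores of 1.0, 0.9, 0.5, and 1.0 releases 300, 270, 150, and 300 tokens and withholds 180.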

Tokenomics Breakdown for Top Projects: Rewards, Vesting, and Deflation

| Project (Token) | Allocation to Rewards 💰 | Vesting Schedules ⏳ | Deflation Mechanisms 🔥 |
|---|---|---|---|
| Bittensor (TAO) | 60% emissions to miners/validators for AI models 🧠 | Immediate for rewards; no vesting on emissions | Halving every ~4 years (21M cap) ✂️ |
| SingularityNET (AGIX) | 25% ecosystem fund for AI service providers 🤖 | Team: 4-year linear vesting | Transaction fee burns 🔥 |
| Cortex (CTXC) | 50% to AI model contributors and miners | Team/private: 3-year vesting | Mining reward halvings ⛏️ |
| ChainLabel ($LABEL) | 50% to smart contract labelers 📝 | Immediate rewards for accurate annotations | Buyback & burn on low-quality penalties 💸🔥 |
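The halving entries in the table imply a capped, geometric issuance curve. Assuming a Bitcoin-style rule in which the first period emits half the cap and each later period emits half of the one before (a simplification, not Bittensor's actual issuance code), the schedule looks like this:

```python
def halving_schedule(cap: float, periods: int) -> list:
    """Per-period emissions when each period issues half of the previous one.

    The first period issues cap / 2, so cumulative supply after n periods is
    cap * (1 - 2**-n): it approaches the cap but never exceeds it.
    """
    emissions = []
    per_period = cap / 2
    for _ in range(periods):
        emissions.append(per_period)
        per_period /= 2  # the halving
    return emissions
```

With a 21,000,000 cap, four periods emit 10,500,000, 5,250,000, 2,625,000, and 1,312,500 tokens, for a cumulative 19,687,500, which is 21,000,000 × (1 - 1/16); the remainder trickles out ever more slowly.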

A Research Matters report on work from IIT Kharagpur validates the tech: blockchain secures data shared for collaborative AI, with tokens aligning contributors without trust assumptions. Lennard Jones Token exemplifies automation: only structures whose energy beats the database's best earn a payout, settled instantly via smart contracts. This rigor elevates AI crypto data annotation from grunt work to intellectual arbitrage.
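The Lennard Jones Token's payout rule is easy to state in code. This sketch assumes reduced units (ε = σ = 1), a plain pairwise Lennard-Jones potential, V(r) = 4ε[(σ/r)^12 - (σ/r)^6], and a database-best energy supplied from outside (for example by an oracle); it is not the token's actual contract.

```python
import itertools
import math


def lj_energy(positions, epsilon=1.0, sigma=1.0):
    """Total energy: sum over atom pairs of 4*eps*((sigma/r)**12 - (sigma/r)**6)."""
    energy = 0.0
    for p, q in itertools.combinations(positions, 2):
        sr6 = (sigma / math.dist(p, q)) ** 6
        energy += 4 * epsilon * (sr6 * sr6 - sr6)
    return energy


def evaluate_submission(positions, database_best, reward):
    """Pay `reward` only if the structure's energy beats the best known value."""
    energy = lj_energy(positions)
    payout = reward if energy < database_best else 0.0
    return payout, energy
```

Three atoms placed at the pair-potential minimum r = 2^(1/6)σ form an equilateral triangle with energy of about -3.0, so that submission earns the reward against a database best of -2.5, while a two-atom submission (energy about -1.0) earns nothing.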

Zoom out to macro implications. As AI blockchain projects proliferate, annotated smart contracts become public goods that can slash exploit costs, which topped $3 billion last cycle. Enterprises tap this for compliance audits, startups for rapid prototyping. Contributors, from Lagos coders to Seoul quants, stand to claim slices of a trillion-dollar pie. OneKey nails it: AI tokens aren't gimmicks; they're the fuel for protocol-native intelligence.

The fusion is accelerating. Platforms are evolving toward hybrid human-AI labeling, where token-funded human labels seed models that auto-annotate, and humans refine the edge cases for premium pay. ChainLabel's dApp hints at this, blending crowds with oracles. Risks persist and volatility ebbs and flows, but the meritocratic core endures. In a world of opaque data pipelines, smart contract data labeling via tokens carves out transparency, empowering AI to safeguard blockchain's promise: code as law, verified by the crowd.
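The hybrid workflow amounts to a routing rule. Assuming the model reports a confidence for its top label, the sketch auto-accepts confident predictions and queues the rest for human review at a premium rate; the 0.9 threshold, the 3x multiplier, and the `Prediction` type are hypothetical, not ChainLabel's design.

```python
from dataclasses import dataclass


@dataclass
class Prediction:
    contract_id: str
    label: str
    confidence: float  # model's probability for its top label, 0.0 to 1.0


def route(predictions, threshold=0.9, base_pay=1.0, premium_multiplier=3.0):
    """Split model output into auto-accepted labels and a paid human-review queue."""
    auto_accepted, human_queue = [], []
    for p in predictions:
        if p.confidence >= threshold:
            auto_accepted.append(p)
        else:
            # Uncertain edge cases go to humans at a premium rate.
            human_queue.append((p, base_pay * premium_multiplier))
    return auto_accepted, human_queue
```

Raising the threshold sends more work to humans and costs more, but it keeps low-confidence model labels from entering the dataset unreviewed.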
