At the fast-growing intersection of blockchain and artificial intelligence, token incentives are reshaping how we collect and label data for crypto analytics models. Traditional centralized platforms often struggle with scalability, quality control, and contributor motivation, leading to datasets riddled with errors that undermine AI performance. Enter decentralized protocols like Sahara AI and Alaya AI, where token-incentivized data labeling flips the script by tying economic rewards directly to annotation accuracy. This model not only democratizes access to high-quality data but also scales contributions globally through cryptocurrency bounties, producing datasets robust enough for advanced machine learning in decentralized finance and beyond.

Decentralized Platforms Pioneering Token-Driven Annotation

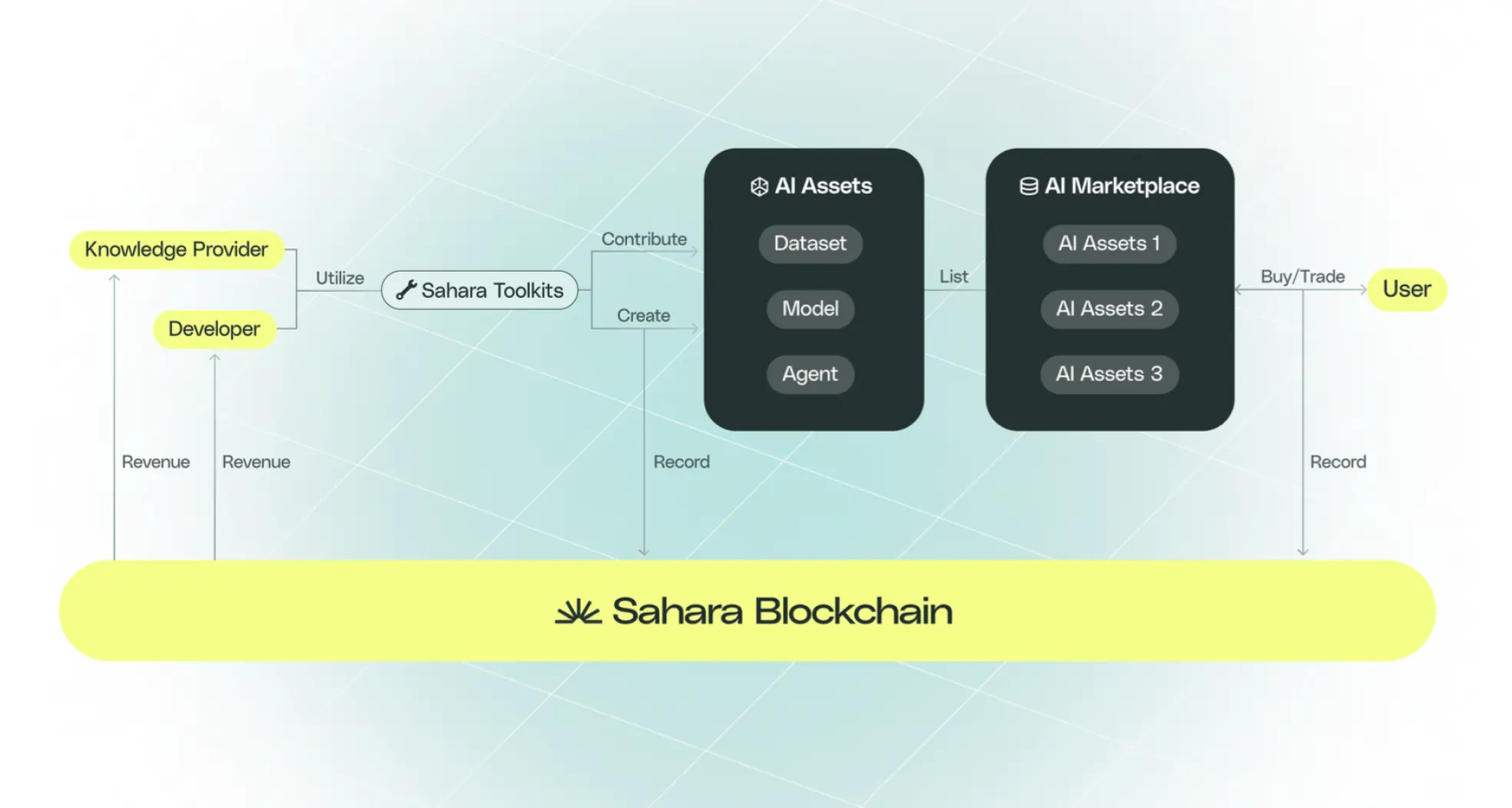

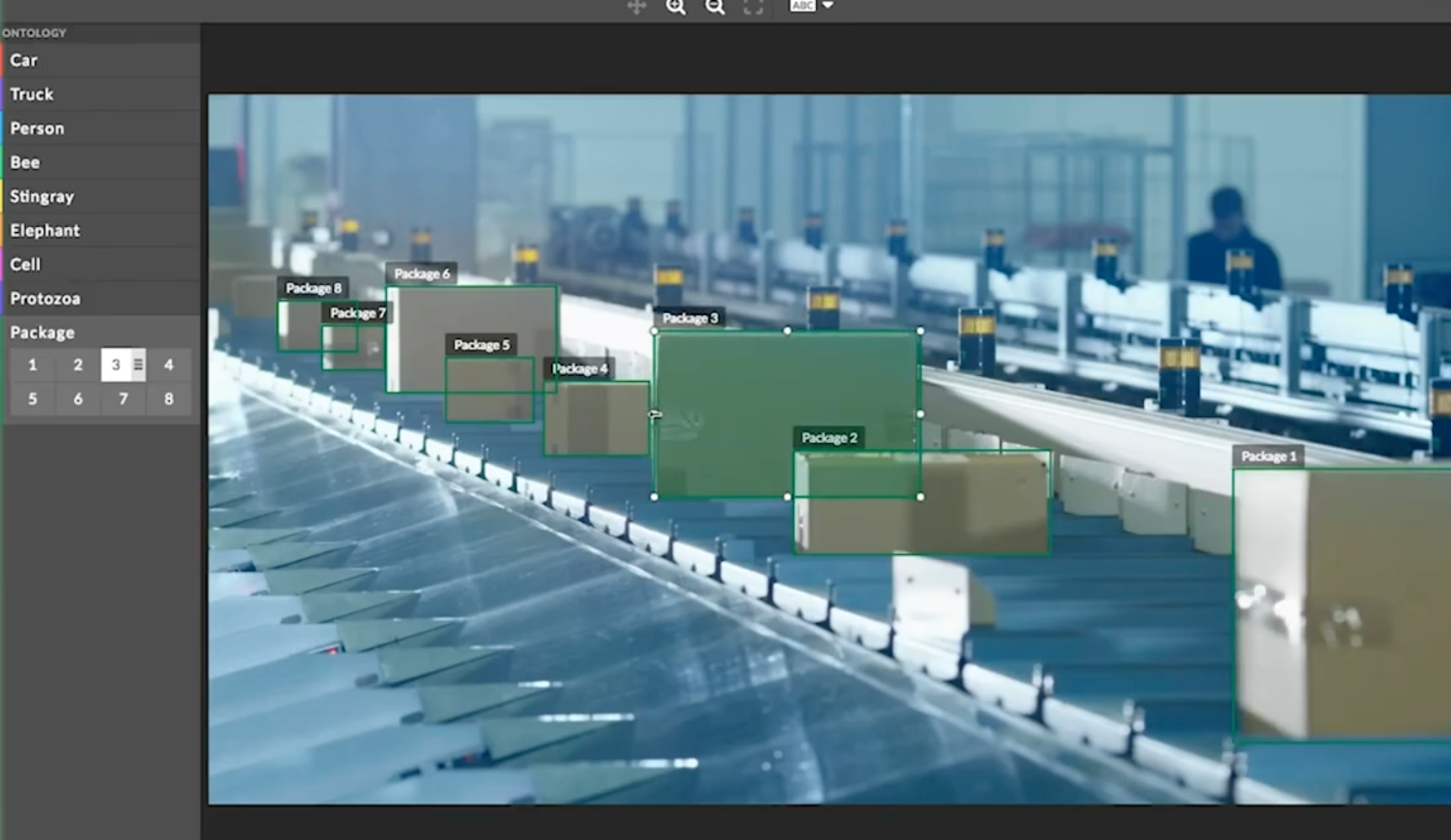

Projects such as PublicAI and Sapien exemplify this evolution. PublicAI bills itself as the world's largest decentralized platform for multi-modal data, spanning text, audio, video, and mapping annotations. Contributors earn tokens for their work, creating a self-sustaining ecosystem where volume meets precision. Sapien, meanwhile, positions itself as a decentralized data foundry, converting human knowledge into enterprise-grade training sets through blockchain transparency and crypto rewards for annotation. Recent launches, like Sahara AI's platform offering more than $450K in token bounties, underscore the momentum: decentralized approaches are attracting broad participation by tying payouts to verifiable quality.

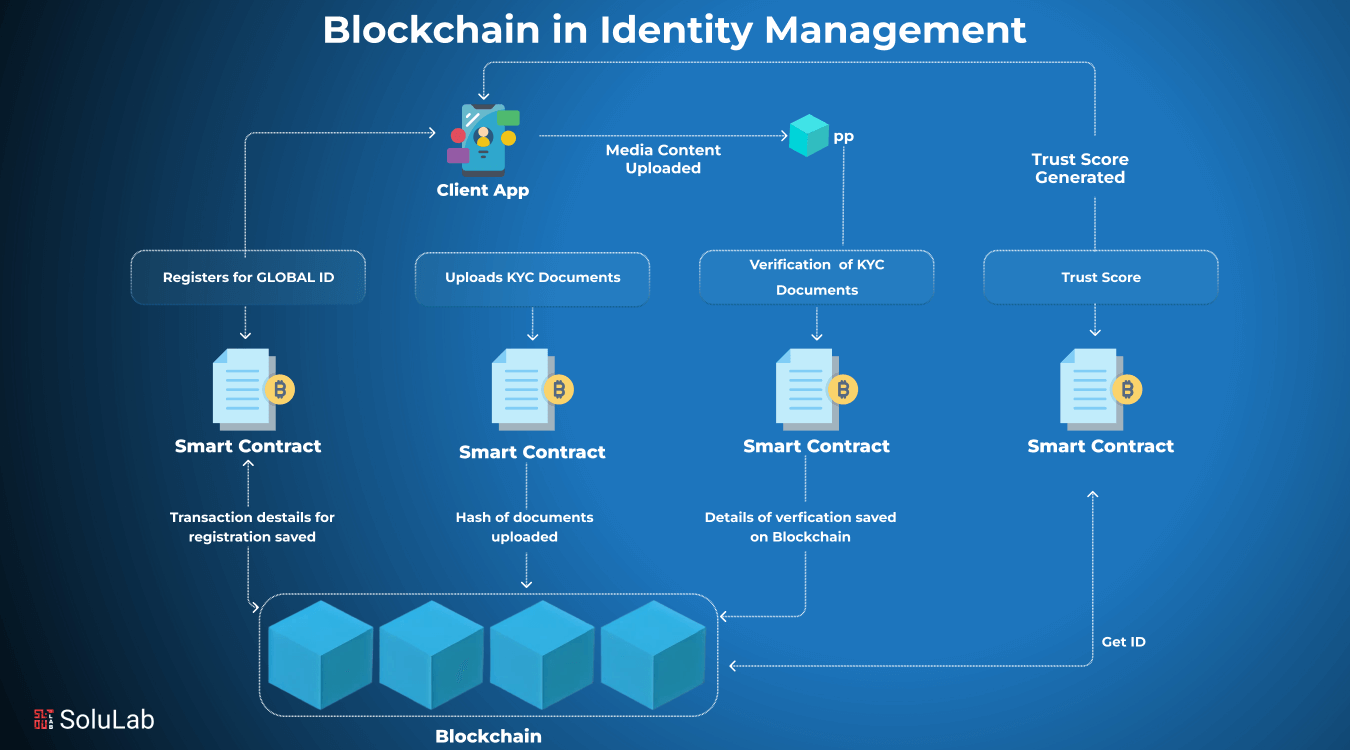

These platforms address core pain points in AI training. Centralized labeling services charge premiums and bottleneck supply, while open-source efforts suffer from inconsistent quality. Blockchain changes that equation with on-chain verification, making annotations tamper-evident and rewarding them in proportion to their quality. ChainLabel, for instance, pays out $LABEL tokens so that higher accuracy earns bigger payouts, a mechanism reported to boost reliability by up to 30% over fiat-based alternatives.
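To make the accuracy-proportional idea concrete, here is a minimal Python sketch of splitting a bounty pool by verified volume weighted by accuracy. It assumes a hypothetical `Contribution` record and a made-up `exponent` parameter; ChainLabel's actual $LABEL payout formula is not described here, so treat this as an illustration only.

```python
from dataclasses import dataclass


@dataclass
class Contribution:
    """Hypothetical record of one labeler's verified work (one record per labeler)."""
    labeler: str
    correct: int  # annotations that passed verification
    total: int    # annotations submitted

    @property
    def accuracy(self) -> float:
        return self.correct / self.total if self.total else 0.0


def split_bounty(pool: float, contributions: list[Contribution],
                 exponent: float = 2.0) -> dict[str, float]:
    """Split a token pool by volume * accuracy**exponent (an assumed weighting)."""
    weights = {c.labeler: c.total * c.accuracy ** exponent for c in contributions}
    total_weight = sum(weights.values())
    if total_weight == 0:
        # Nobody produced verified work, so nothing is paid out.
        return {name: 0.0 for name in weights}
    return {name: pool * w / total_weight for name, w in weights.items()}


payouts = split_bounty(1_000.0, [Contribution("alice", 90, 100),
                                 Contribution("bob", 60, 100)])
print(payouts)  # alice receives roughly 692 tokens, bob roughly 308
```

With `exponent=2`, a labeler at 90% accuracy on 100 labels outweighs one at 60% on the same volume by 81 to 36, so payouts grow faster than accuracy alone.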

Key Token-Incentivized Data Labeling Projects

- Sahara AI: Decentralized platform offering $450K+ in crypto bounties for accurate AI training data labeling.

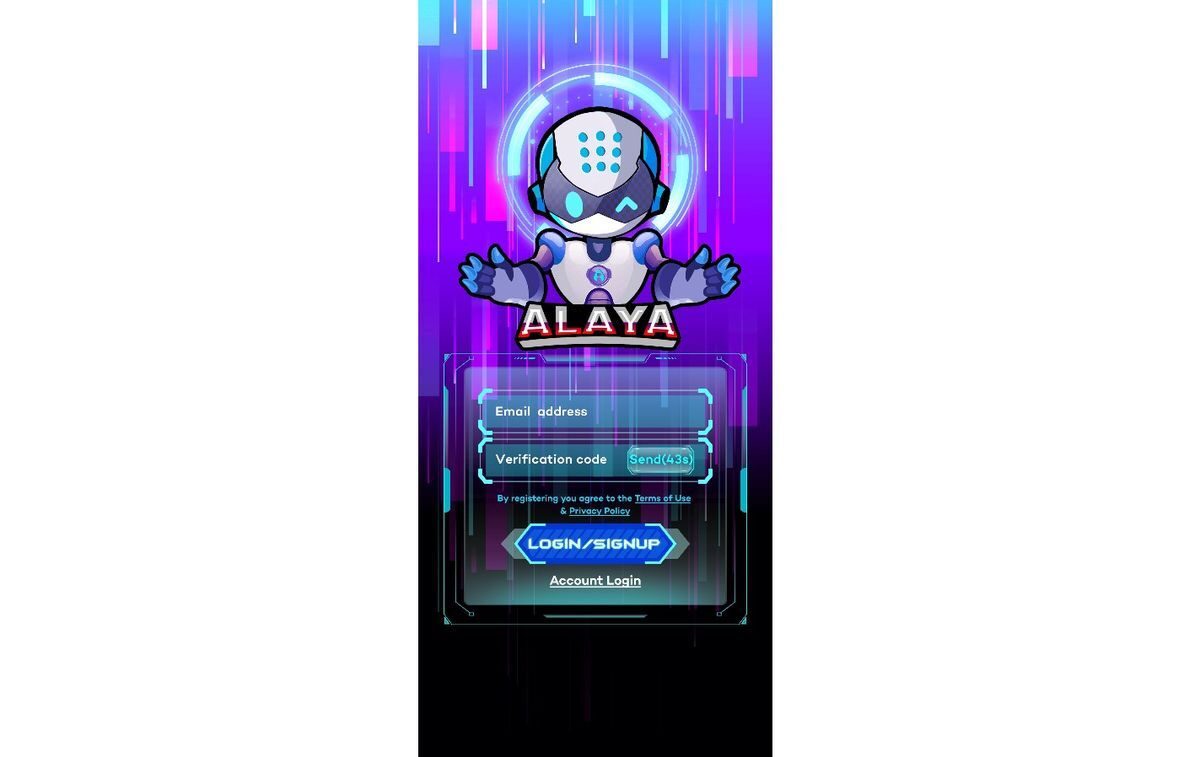

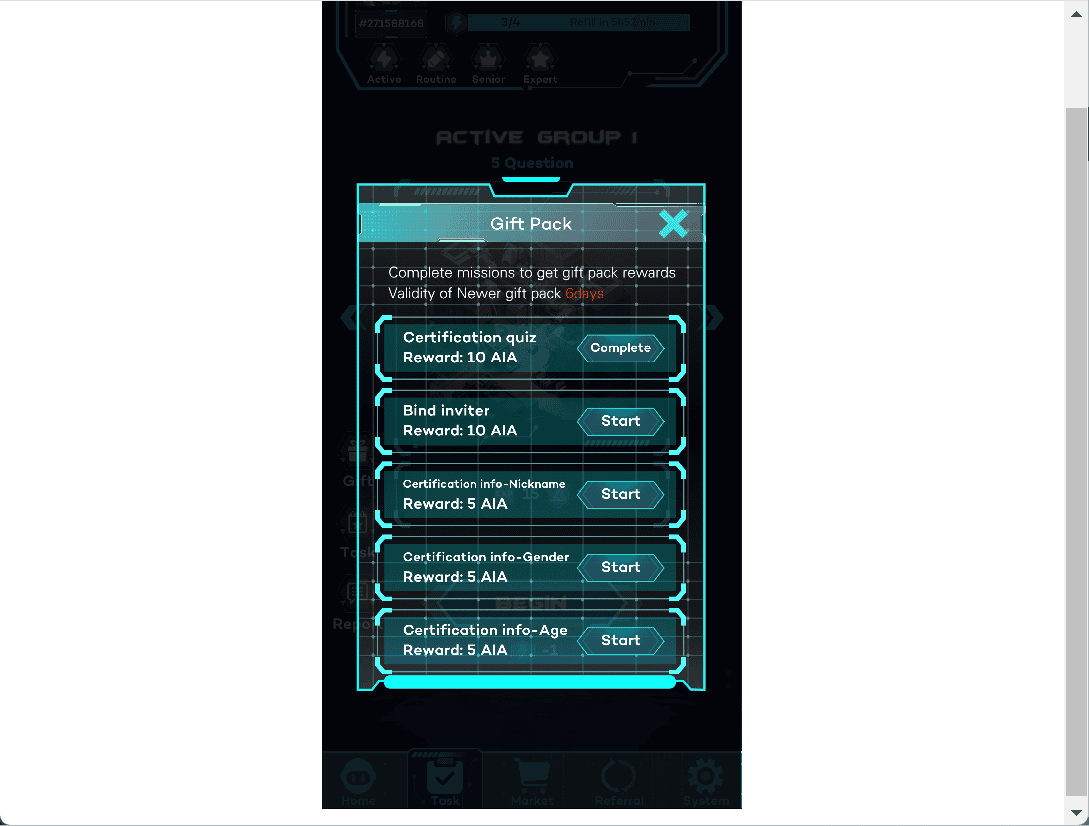

- Alaya AI: Rewards contributors with ALA tokens via blockchain and gamification for data annotation tasks.

- PublicAI: World's largest decentralized platform for multi-modal data collection and annotation (text, audio, video, mapping).

- Sapien: Decentralized human data foundry using token incentives and blockchain for high-quality AI training datasets.

- ChainLabel: Utilizes $LABEL tokens to reward accuracy in decentralized data labeling.

Dynamic Rewards Prioritizing Precision Over Volume

Reward structures in these systems are carefully engineered. Sahara AI's dynamic incentives grant escalating token rewards for reliable annotations and penalize low-quality work through slashing or reputation deductions. This mirrors forex trading signals, where precision trumps frequency: just as Heikin Ashi candles smooth out noise to clarify trends, these protocols filter out subpar data. Alaya AI goes further with a token-based economy, distributing ALA tokens for completed tasks while adding gamification elements like badges to sustain engagement. Contributors aren't mere laborers; they're stakeholders in a meritocratic network.
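A minimal sketch of an escalating-reward rule with reputation deductions follows. Every constant (`BASE_REWARD`, `STREAK_BONUS`, `MAX_MULTIPLIER`, `REPUTATION_PENALTY`) and the `settle` function are hypothetical, not Sahara AI's published parameters; stake slashing is left out to keep it short.

```python
from dataclasses import dataclass

# Illustrative constants; not any platform's published parameters.
BASE_REWARD = 10.0         # tokens per accepted annotation
STREAK_BONUS = 0.05        # +5% for each consecutive accepted annotation
MAX_MULTIPLIER = 2.0       # cap on the escalation
REPUTATION_PENALTY = 0.1   # deducted whenever a review rejects an annotation


@dataclass
class LabelerState:
    reputation: float = 1.0  # hypothetical score in [0, 1] that scales every payout
    streak: int = 0          # consecutive accepted annotations


def settle(state: LabelerState, accepted: bool) -> float:
    """Return tokens earned for one reviewed annotation, updating state in place."""
    if not accepted:
        # Rejected work earns nothing, resets the streak, and costs reputation.
        state.streak = 0
        state.reputation = max(0.0, state.reputation - REPUTATION_PENALTY)
        return 0.0
    state.streak += 1
    multiplier = min(MAX_MULTIPLIER, 1.0 + STREAK_BONUS * (state.streak - 1))
    return BASE_REWARD * multiplier * state.reputation


s = LabelerState()
print([settle(s, ok) for ok in (True, True, False, True)])
```

Because reputation multiplies every later payout, a single rejection keeps costing the labeler until some recovery rule (not modeled here) restores it.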

Quantitative edges emerge here. Platforms report 25-40% improvements in dataset purity when tokens vest based on peer reviews or AI audits. Perle Labs adds on-chain reputation scores that let high performers unlock premium tasks and compound their rewards. In decentralized AI for crypto analytics, where models predict token flows or sentiment shifts, that purity translates into sharper forecasts and lower overfitting risk.
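Peer-review-based vesting could look something like the following sketch, assuming a hypothetical rule where nothing unlocks until a minimum number of reviews arrive and the released share then equals the approval ratio. The function name and the `min_reviews` default are illustrative assumptions.

```python
def vested_amount(grant: float, approvals: int, reviews: int,
                  min_reviews: int = 3) -> float:
    """Tokens released from a grant based on peer-review outcomes (hypothetical rule).

    Nothing vests until `min_reviews` reviews are in; after that the released
    share equals the approval ratio, so disputed work unlocks only partially.
    """
    if not 0 <= approvals <= reviews:
        raise ValueError("approvals must be between 0 and reviews")
    if reviews < min_reviews:
        return 0.0
    return grant * approvals / reviews


print(vested_amount(100.0, approvals=3, reviews=4))  # 75.0
```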

Gamification Meets Blockchain for Sustained Contributions

Gamification amplifies these incentives, turning data labeling into a habit-forming pursuit. Alaya AI weaves in badges, leaderboards, and NFT drops, motivating users much as crypto airdrops spur early adoption. Sapien's Web3 focus applies blockchain-based labeling incentives to verified datasets, where token stakes give contributors skin in the game. Privacy-preserving solutions like DLM extend this with fractional ownership of labeled data, enabling cross-chain trading of annotations as assets.
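As an illustration of how badges and leaderboards might be computed, here is a small sketch; the badge names and thresholds in `BADGES` are invented for the example and do not reflect Alaya AI's actual tiers.

```python
from typing import Optional

# Hypothetical badge tiers, checked from highest to lowest.
BADGES = [(1000, "gold"), (250, "silver"), (50, "bronze")]


def badge_for(accepted: int) -> Optional[str]:
    """Return the highest badge whose threshold the accepted-label count meets."""
    for threshold, name in BADGES:
        if accepted >= threshold:
            return name
    return None


def leaderboard(scores: dict[str, int], top: int = 3) -> list[tuple[str, int, Optional[str]]]:
    """Rank labelers by accepted labels (ties broken alphabetically) and attach badges."""
    ranked = sorted(scores.items(), key=lambda kv: (-kv[1], kv[0]))
    return [(name, n, badge_for(n)) for name, n in ranked[:top]]


print(leaderboard({"ana": 1200, "ben": 30, "chen": 300, "dee": 60}))
```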

This fusion creates flywheels: quality data attracts AI developers, who fund more bounties, which draw skilled labelers worldwide. PublicAI's scale handles petabytes of multi-modal inputs, while AppSyte's planned marketplace aims to democratize access further. From my vantage point analyzing patterns in volatile markets, this isn't fleeting hype. Token incentives embed accountability at the protocol level, yielding decentralized AI training datasets that rival Big Tech output with the Web3 ethos intact.

Consider the analytics angle. In crypto, where on-chain data fuels predictive models, labeling sentiment from social feeds or transaction graphs demands nuance. Platforms that pay tokens for on-chain data annotation excel here, as contributors refine labels for context-specific traits like whale movements or protocol upgrades. Early metrics from these ecosystems reportedly show labeler retention doubling under token regimes compared with flat hourly pay.
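Nuanced labels usually come from several contributors voting on the same item. One standard way to aggregate them is plain majority voting with an agreement threshold, sketched below; the label strings and the 0.6 default are hypothetical, and the platforms named above may aggregate differently.

```python
from collections import Counter
from typing import Optional


def consensus(votes: list[str], min_agreement: float = 0.6) -> tuple[Optional[str], float]:
    """Majority vote over labels; return (label, agreement), or (None, agreement) if too contested."""
    if not votes:
        return None, 0.0
    label, count = Counter(votes).most_common(1)[0]
    agreement = count / len(votes)
    return (label if agreement >= min_agreement else None), agreement


# Hypothetical labels for one wallet cluster, submitted by five contributors.
print(consensus(["whale_accumulation"] * 4 + ["noise"]))
```

Because any label clearing a threshold above 0.5 must hold a strict majority, ties always return `None` and can be routed to extra reviewers.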

Labeler retention isn't the only win. These systems cultivate specialist communities, much like niche forex forums where pattern recognition sharpens over time. In crypto analytics, where models dissect memecoin pumps or layer-2 scaling events, nuanced labels capture subtleties that fiat-paid labeling systems miss. Platforms like PublicAI process multi-modal streams, supplying training data for models that fuse text sentiment with video-verified events for holistic predictions.

Benchmarking Token Incentives Against Legacy Methods

The reported numbers support the shift. Sahara AI's launch drew thousands of contributors within weeks, disbursing bounties tied to accuracy scores above 95%. Alaya AI reports task completion rates 2.5x higher than centralized peers, which it credits to ALA token vesting and gamified progression. Sapien says its blockchain verification cuts fraud by 40%, per internal audits, helping decentralized AI training datasets meet enterprise standards without corporate gatekeepers.
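Bounties tied to an accuracy bar are often enforced with hidden gold-standard items whose correct answers are known in advance. The sketch below assumes that approach (the source does not say how Sahara AI scores accuracy), with a 0.95 threshold and a hypothetical `min_gold` floor so a handful of lucky answers cannot qualify.

```python
def gold_accuracy(answers: dict[str, str], gold: dict[str, str]) -> tuple[int, float]:
    """Return (number of gold items answered, accuracy on those items)."""
    scored = [task for task in answers if task in gold]
    if not scored:
        return 0, 0.0
    correct = sum(answers[task] == gold[task] for task in scored)
    return len(scored), correct / len(scored)


def eligible_for_bounty(answers: dict[str, str], gold: dict[str, str],
                        threshold: float = 0.95, min_gold: int = 20) -> bool:
    """Hypothetical rule: enough gold items answered and accuracy at or above threshold."""
    n, acc = gold_accuracy(answers, gold)
    return n >= min_gold and acc >= threshold


gold = {f"task{i}": "bullish" for i in range(20)}
answers = dict(gold, task0="bearish")  # one mistake out of 20 gold items
print(eligible_for_bounty(answers, gold))
```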

Comparison of Leading Decentralized AI Data Labeling Platforms

| Project | Native Token/Incentive | Key Features | Data Types |
|---|---|---|---|
| Sahara AI | $450K+ bounties, dynamic rewards | Prioritizes accuracy with higher rewards for reliable annotations | AI training datasets |
| Alaya AI | ALA tokens | Gamification (badges, rewards, NFTs) | Data labeling and annotation |
| PublicAI | Decentralized incentives for scale | World's largest multi-modal data platform | Text, audio, video, mapping data |
| Sapien | Token incentives | Blockchain transparency and verification | Human data for enterprise-grade AI training |
| ChainLabel | $LABEL | Accuracy-based rewards (higher for better accuracy) | Data labeling |
| Perle Labs | Cryptocurrency rewards | Verifiable on-chain reputation | Specialized data tasks |

This table highlights a clear pattern: token models excel in scalability and quality. Legacy providers like Scale AI or Appen rely on fixed wages, which caps output at millions of labels per year, while decentralized alternatives reach billions, powered by global, incentivized labor. Viewed through a data-driven lens, the edge is clear: error rates reportedly drop 25-35% under blockchain-based labeling incentives, directly boosting model AUC scores in crypto prediction tasks.

Yet precision demands vigilance. Over-reliance on tokens invites Sybil attacks, in which account farms game rewards. Protocols counter with proof-of-humanity checks and slashing, akin to MEV protections in DeFi. DLM's fractional ownership goes further, letting labelers trade data slices across chains and creating secondary markets that refine value discovery.
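A rough sketch of the two defenses mentioned above follows, assuming proof-of-humanity is an external check that yields a boolean and that each account must lock collateral to claim rewards. `MIN_STAKE`, `SLASH_FRACTION`, and the `Account` type are hypothetical.

```python
from dataclasses import dataclass

MIN_STAKE = 100.0      # hypothetical collateral required to claim rewards
SLASH_FRACTION = 0.2   # hypothetical share of stake burned per confirmed fraud


@dataclass
class Account:
    verified_human: bool  # result of an external proof-of-humanity check (assumed)
    stake: float          # tokens locked as collateral


def can_claim(acct: Account) -> bool:
    """Only verified humans with enough collateral may claim labeling rewards."""
    return acct.verified_human and acct.stake >= MIN_STAKE


def slash(acct: Account) -> float:
    """Burn part of the stake after confirmed fraud; return the amount slashed."""
    penalty = acct.stake * SLASH_FRACTION
    acct.stake -= penalty
    return penalty


acct = Account(verified_human=True, stake=120.0)
print(can_claim(acct), slash(acct), can_claim(acct))
```

Requiring collateral per account is what makes Sybil farming expensive: each extra fake identity needs its own stake, and confirmed fraud burns part of it.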

Crypto Analytics Transformed: Real-World Edges

Apply this to crypto analytics, and the payoff crystallizes. Imagine training models on labeled on-chain flows: whale accumulations tagged by intent, sentiment scored from threaded discussions. PublicAI's video annotations verify exchange listings visually, while Sapien verifies protocol metrics against whitepapers. Token incentives ensure labels evolve with market regimes, much like adapting Heikin Ashi to ranging versus trending pairs.

Perle Labs' reputation system shines here, assigning on-chain credentials that unlock high-stakes tasks like oracle data curation. Early adopters report 18% better forecast accuracy on token price swings, which they attribute to cleaner inputs. AppSyte's marketplace blueprint extends this, pooling liquidity for custom datasets tailored to analytics firms chasing alpha in volatile sectors.
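Reputation-gated task tiers might be modeled like this, assuming reputation is an exponential moving average of review outcomes. The tier names, thresholds, and `alpha` smoothing factor are illustrative assumptions, not Perle Labs' actual scheme.

```python
# Hypothetical task tiers and the minimum reputation each requires.
TIERS = {"basic": 0.0, "premium": 0.8, "oracle_curation": 0.95}


def update_reputation(rep: float, accepted: bool, alpha: float = 0.1) -> float:
    """Exponential moving average of review outcomes (1 = accepted, 0 = rejected)."""
    return (1 - alpha) * rep + alpha * (1.0 if accepted else 0.0)


def unlocked_tasks(rep: float) -> list[str]:
    """Task tiers whose reputation floor the labeler currently meets."""
    return [task for task, floor in TIERS.items() if rep >= floor]


rep = 0.9
for _ in range(10):  # ten accepted annotations in a row
    rep = update_reputation(rep, True)
print(round(rep, 4), unlocked_tasks(rep))
```

The moving average rewards sustained quality: one good batch moves the score only slightly, so reaching the top tier takes a consistent track record.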

Token Incentive Advantages

- Scalable Global Workforce: Platforms like PublicAI leverage tokens to build the world's largest decentralized network for multi-modal data collection from contributors worldwide.

- Verifiable Quality via Blockchain: ChainLabel rewards accurate labeling with $LABEL tokens, using blockchain for transparent, verifiable annotations and higher payouts for reliability.

- Gamified Retention Boosts: Alaya AI employs badges, NFTs, and token rewards to gamify participation, enhancing contributor engagement and retention in data tasks.

- Multi-Modal Coverage for Rich Models: PublicAI incentivizes labeling of text, audio, video, and mapping data, enabling comprehensive datasets for advanced AI models.

- Secondary Markets for Data Assets: DLM enables tokenization of labeled data for fractional ownership and cross-chain trading, creating liquid markets for verified datasets.
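Fractional ownership of a labeled dataset reduces, at its core, to a share ledger plus pro-rata revenue distribution. The in-memory `DatasetShares` class below is a hypothetical, single-chain sketch; it is not DLM's contract and ignores tokenization standards and cross-chain bridging entirely.

```python
class DatasetShares:
    """Hypothetical in-memory share ledger for one tokenized labeled dataset."""

    def __init__(self, holders: dict[str, int]):
        if any(n < 0 for n in holders.values()):
            raise ValueError("share counts must be non-negative")
        self.shares = dict(holders)

    @property
    def total(self) -> int:
        return sum(self.shares.values())

    def transfer(self, sender: str, receiver: str, amount: int) -> None:
        """Move whole shares between holders, as a secondary-market trade would."""
        if amount <= 0 or self.shares.get(sender, 0) < amount:
            raise ValueError("invalid amount or insufficient shares")
        self.shares[sender] -= amount
        self.shares[receiver] = self.shares.get(receiver, 0) + amount

    def distribute(self, revenue: float) -> dict[str, float]:
        """Split licensing revenue pro rata across current holders."""
        total = self.total
        if total == 0:
            return {}
        return {h: revenue * n / total for h, n in self.shares.items() if n > 0}


ledger = DatasetShares({"alice": 600, "bob": 400})  # e.g. split by labels contributed
ledger.transfer("alice", "carol", 100)              # alice sells 100 shares to a buyer
print(ledger.distribute(1_000.0))
```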

In my decade charting currencies, I've seen incentives dictate outcomes. Flat pay breeds complacency; skin in the game sparks excellence. Web3 data platforms mirror this, turning token rewards for on-chain data annotation into tools that arm quants with superior data feeds. ChainLabel's accuracy multipliers, for example, weight contributions dynamically, producing datasets where signal outweighs noise.

The flywheel accelerates as AI developers integrate these sources. DeFi protocols now embed labeled data in risk models, and NFT platforms use it for rarity scoring. Retention metrics climb with NFT perks and leaderboards, sustaining momentum. Privacy layers like zero-knowledge proofs safeguard sensitive labels, broadening appeal to regulated analytics.
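Zero-knowledge proofs are too involved for a short example. A much simpler and different primitive, a salted hash commitment, illustrates the narrower idea of publishing a binding fingerprint of a label without revealing it until later; unlike a ZK proof, it proves nothing about the label until it is opened. The `commit` and `verify` helpers are illustrative.

```python
import hashlib
import secrets
from typing import Optional


def commit(label: str, salt: Optional[str] = None) -> tuple[str, str]:
    """Return (digest, salt): a binding fingerprint that hides the label while the salt stays secret."""
    # A random salt stops anyone from brute-forcing a small label set from the digest.
    salt = salt if salt is not None else secrets.token_hex(16)
    digest = hashlib.sha256(f"{salt}:{label}".encode()).hexdigest()
    return digest, salt


def verify(digest: str, label: str, salt: str) -> bool:
    """Check a revealed (label, salt) pair against the published digest."""
    return hashlib.sha256(f"{salt}:{label}".encode()).hexdigest() == digest


digest, salt = commit("bearish")        # publish only `digest` at submission time
print(verify(digest, "bearish", salt))  # True once the labeler reveals label and salt
```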

This isn't mere augmentation; it's a paradigm shift. Centralized data monopolies falter under scale pressures, while token-driven networks thrive on abundance. Just as Heikin Ashi candlesticks smooth forex trends, incentivized precision smooths AI inputs, delivering analytics that navigate crypto's storms with foresight. The result: sharper trades, resilient models, and a democratized frontier where contributors worldwide fuel the next AI breakthroughs.
